How smart can AI become?

Plus: the US leads Europe in AI adoption, EV batteries have become 99 percent cheaper, and more

How smart can AI become?

There’s little reason to think that AI couldn’t become smarter than humans. But how much smarter? On Sunday, François Chollet initiated a debate on that question (quotations have been lightly edited for clarity):

One of the biggest misconceptions people have about intelligence is seeing it as some kind of unbounded scalar stat, like height. ‘Future AI will have 10,000 IQ’, that sort of thing. Intelligence is a conversion ratio, with an optimality bound. Increasing intelligence is not so much like ‘making the tower taller’, it’s more like ‘making the ball rounder’. At some point it’s already pretty damn spherical and any improvement is marginal.

Now of course smart humans aren’t quite at the optimal bound yet on an individual level, and machines will have many advantages besides intelligence – mostly the removal of biological bottlenecks: greater processing speed, unlimited working memory, unlimited memory with perfect recall… but these are mostly things humans can also access through externalized cognitive tools.

I do believe that a large collective of the smartest humans, aided by external tools, sits very close to the optimality bound – i.e. humans should be able to solve any solvable problem (where the required information is available) if they pay enough attention to it.

This received plenty of responses. alz:

This is basically my view: infinite free intelligence will not hyperaccelerate science (though it may well put all the humans out of a job!) because science in 2026 is not actually intelligence-bottlenecked. We have basically enough intelligence already.

However, I don’t think this follows from François’s tweets. Here’s Toby Ord’s response to him:

So it sounds like you are using a definition of intelligence where time is factored out (so you wouldn’t count a society that could discover calculus in 5 minutes as more intelligent than one that took 300,000 years).

. . .

And it would make the consequences of your claim weaker than people would assume. i.e. it is compatible with this that humans are 99 percent (or 100 percent) of perfect intelligence, yet future AIs could run rings around us, inventing the next thousand years of technology within months, etc.

So François’s claims are consistent with AI hyperaccelerating science. alz misinterpreted him in exactly the way Toby warned about, which suggests François wasn’t clear enough.

Ryan Greenblatt makes a point similar to Toby’s:

I probably agree with a claim like ‘human civilization with cognitive tools and unlimited time and attempts could eventually reproduce any specific cognitive feat AIs could do’, but the quantitative factor may be extremely large!

We are nowhere near the limits on how much cognitive firepower is possible.

Notice that Ryan replaces the word ‘intelligence’ with ‘cognitive firepower’, which carries fewer associations. Many debates get stuck over definitions. When that happens, it’s often a good idea to reformulate your claims in other terms. ‘Cognitive firepower’ is well-chosen, since it makes his claim hard to dispute. Clearly, we’re far from maximum cognitive firepower.

Ryan also gives another argument, referencing human individual differences:

AIs could have way higher data efficiency/learning speed/fluid intelligence than humans. Humans vary wildly in these attributes and there isn’t a clear cliff/sigmoid in the human population!

Máté Bedő makes the same argument:

I find it strange that smart people look at how smart the average human is and then consider how smart von Neumann was with macroscopically indistinguishable thinking parts (running on 20W) and they conclude there is minimal headway for improvement for terawatt minds.

However we define the word ‘intelligence’, sufficiently advanced AI would have a tremendous real-world impact. There’s an argument that such AI is still far away, but I don’t think there’s an argument that its impact would be moderate.

The US leads Europe in AI adoption

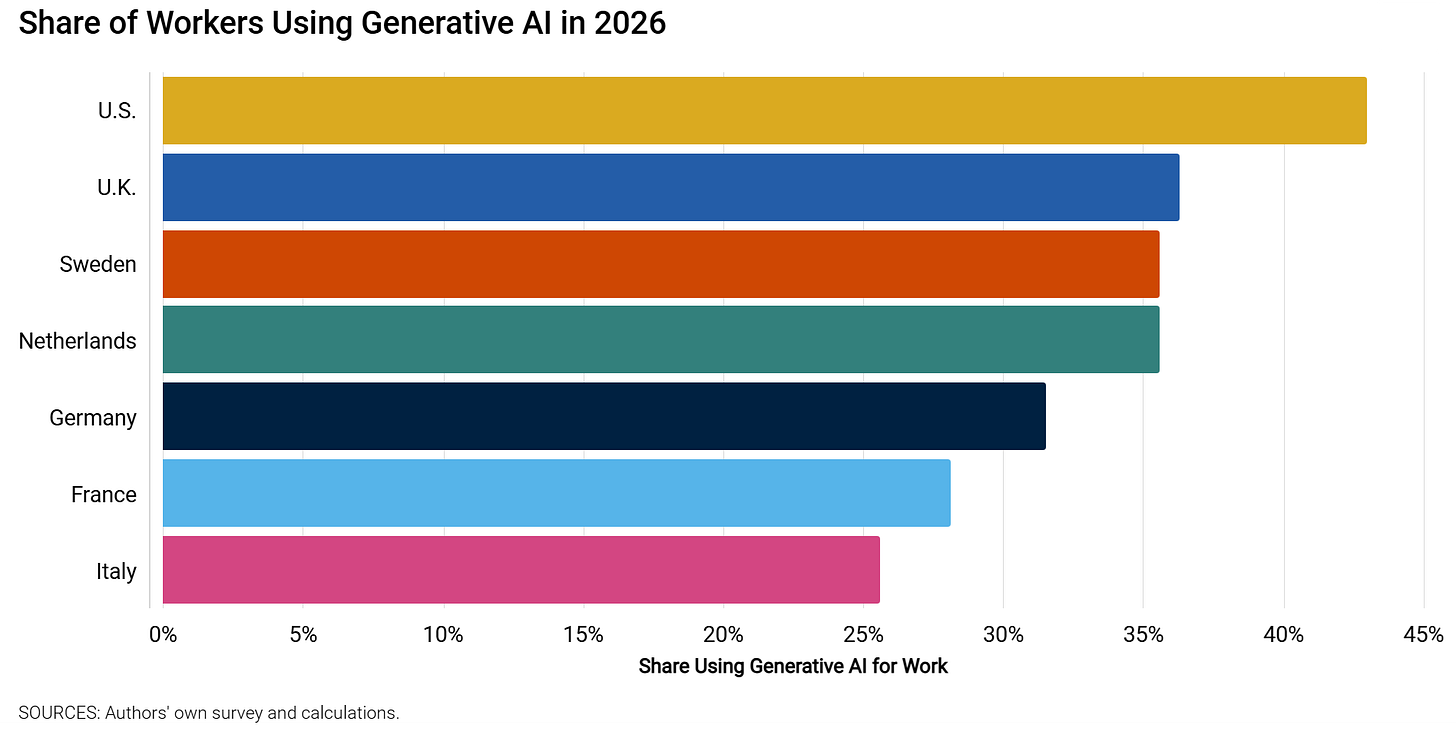

A new paper from the St Louis Fed finds that a larger share of workers use AI in the US than in Europe.

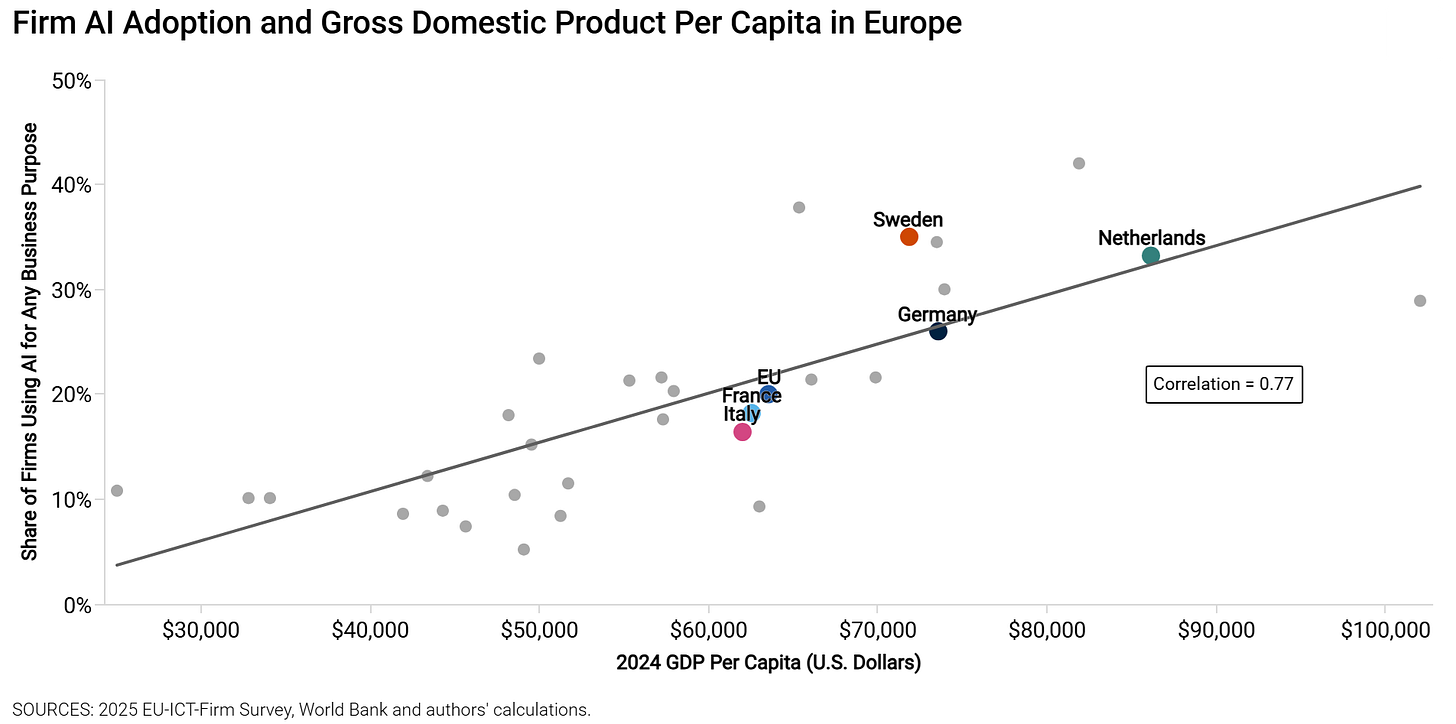

Within Europe, people in richer countries use AI more:

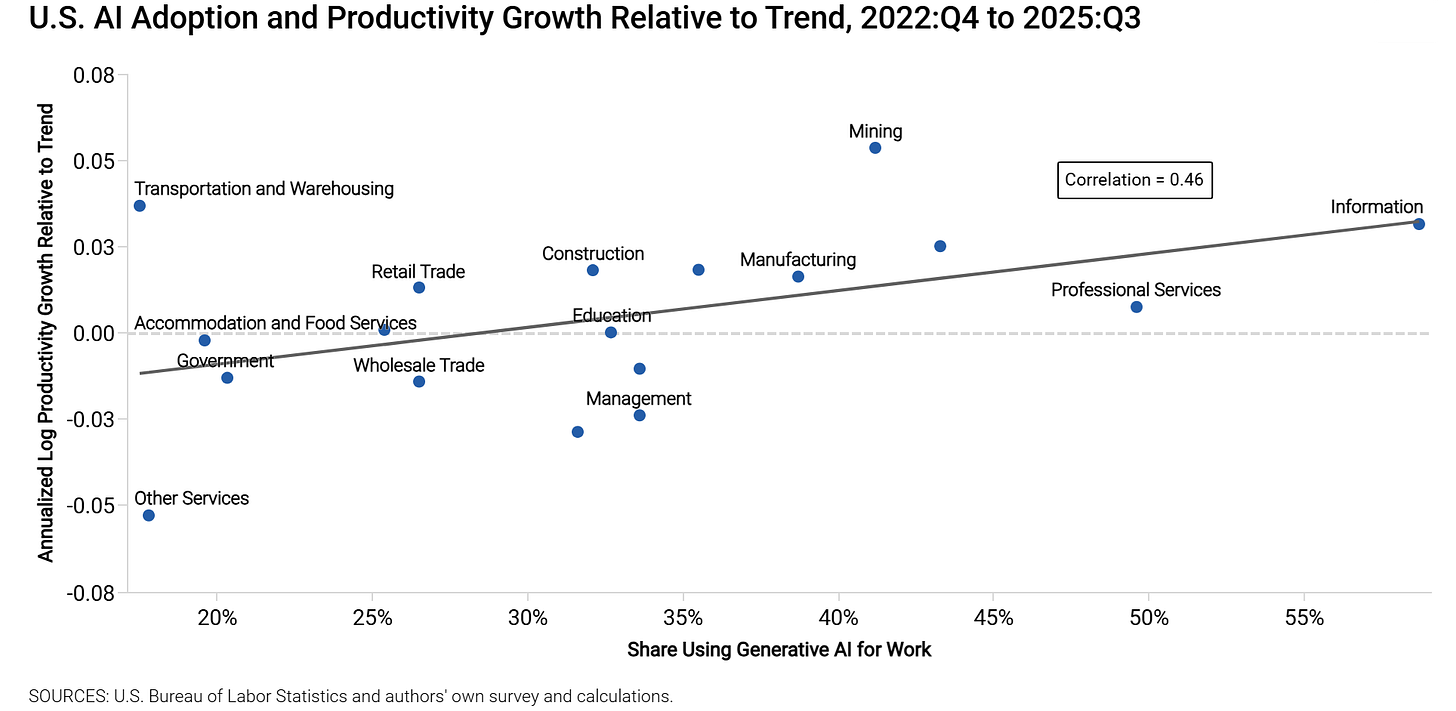

There are differences across industries, but perhaps not the ones you’d have expected. Many have argued that AI would disproportionately affect white-collar industries, but this study doesn’t seem to bear that out.

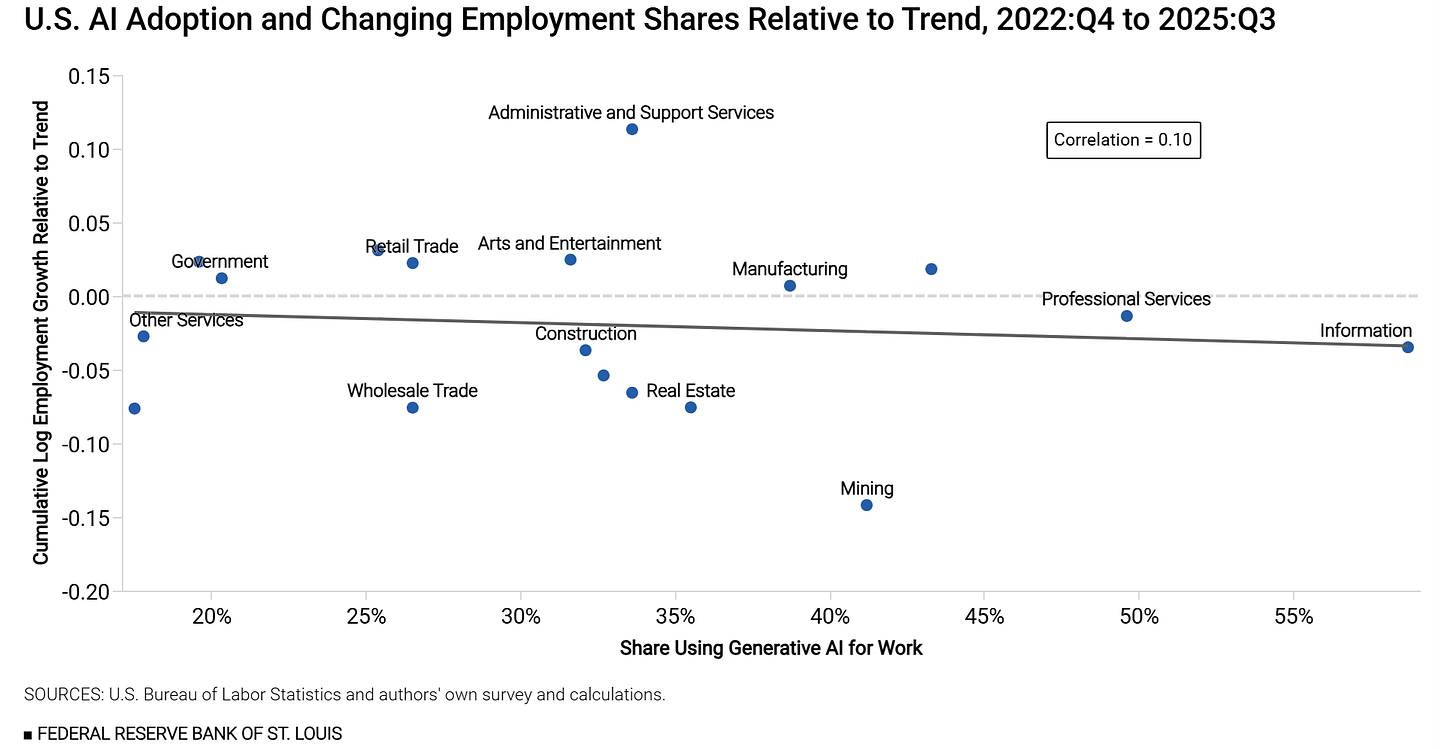

Note also that there’s no clear evidence that AI adoption is affecting employment at the industry level.

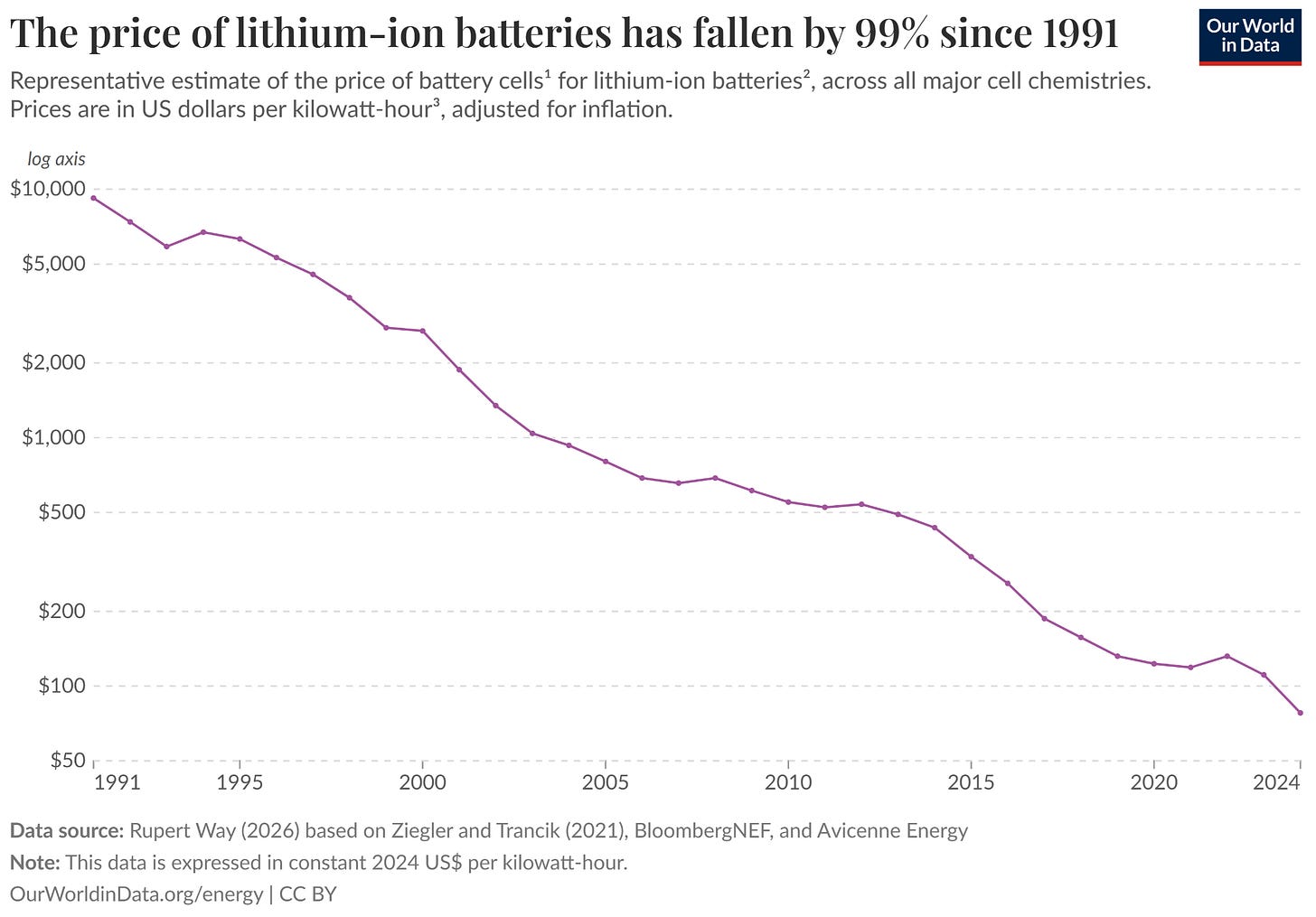

Electric vehicle batteries have become 99 percent cheaper

In China, some of the best-selling electric vehicles now cost as little as $10,000. How have they become so cheap? The key reason is the falling cost of batteries, which has dropped by 99 percent over the last three decades.
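To get a feel for what a 99 percent drop means year to year, here is a rough back-of-the-envelope sketch. It assumes exactly 30 years and a constant rate of decline, neither of which comes from the source. Real battery prices fell unevenly, and the function names are purely illustrative.

```python
import math


def implied_annual_decline(total_decline: float, years: float) -> float:
    """Constant yearly fractional decline that compounds to `total_decline`.

    Assumption: the decline is spread evenly over `years`.
    """
    remaining = 1.0 - total_decline          # share of the original cost left
    return 1.0 - remaining ** (1.0 / years)  # solve (1 - r) ** years = remaining


def halving_time(annual_decline: float) -> float:
    """Years for the cost to halve at a constant annual decline."""
    return math.log(0.5) / math.log(1.0 - annual_decline)


# Assumed inputs: 99 percent total decline over exactly 30 years.
rate = implied_annual_decline(0.99, 30)
print(f"{rate:.1%} per year, halving every {halving_time(rate):.1f} years")
```

Under those assumptions, the cost falls by about 14 percent a year, which means it halves roughly every four and a half years.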

How microbubbles can carry drugs to the right place in the body

Standard methods often fail to deliver drugs to their targets, especially the brain. In Works in Progress, Ambika Grover writes about a possible solution: microbubbles, tiny gas-filled spheres injected into the bloodstream. Doctors direct ultrasound at the target – such as a tumor or clot – bursting the bubbles to release the drugs where they’re needed. This spectacular technology could improve the treatment of everything from stroke to brain cancer.

In brief

Iran is now earning nearly twice as much from oil sales as it did before the war

US troops in the Middle East relocate from bases to hotels and offices to avoid Iranian attacks

Tech philanthropy isn’t nearly big enough to replace government science cuts in the US

How the quest to amplify electromagnetic signals led to four major inventions

YouGov retracts poll on surging church attendance among young Brits over fraudulent responses

GiveWell uses AI to red team its research with mixed results

Data centers aren’t raising the temperatures of the surrounding land

Stuart Ritchie and Tom Chivers on studies about power posing that failed to replicate

People believe we’re less cooperative than we used to be, but the opposite is true

Paper claiming liberal reforms harmed the Global South receives plenty of criticism

How Demis Hassabis tested Mark Zuckerberg and went with Google instead