The preference to be served by a robot

Plus: more progress on crime, the roots of aesthetic modernism, and more

Welcome to The Update. In today’s issue:

The preference to be served by a robot

The New York Times reports on how Waymo’s robotaxis are changing behavior in Los Angeles, as parents who previously drove their teenage children themselves now rely on Waymo instead. Parents have been reluctant to use conventional ridesharing services, both because of safety worries and because those services often turn down minors. But you don’t have those problems with a self-driving car. While it’s still not legal to use self-driving cars in this way in California (unlike in Phoenix), that hasn’t stopped parents from using them.

In debates about job automation, it’s often argued that even if robots or AI systems were to become cheaper or more capable than human workers, consumers would still prefer to interact with a human. There are no doubt such cases – but I think the converse will often be true as well. It’s not just the parents of teenagers who prefer self-driving cars – as I’ve reported previously, this preference is widespread. Another example that comes to mind is psychotherapy – for many people, it may be less embarrassing to open up to an AI therapist than to a human. Similarly, older people who need help with daily hygiene could find a robot less intrusive than a human carer. As AI and robotics continue to progress, I expect us to discover that this phenomenon is pervasive. In my view, people often overestimate the extent to which consumer preferences will save human jobs.

More progress on crime

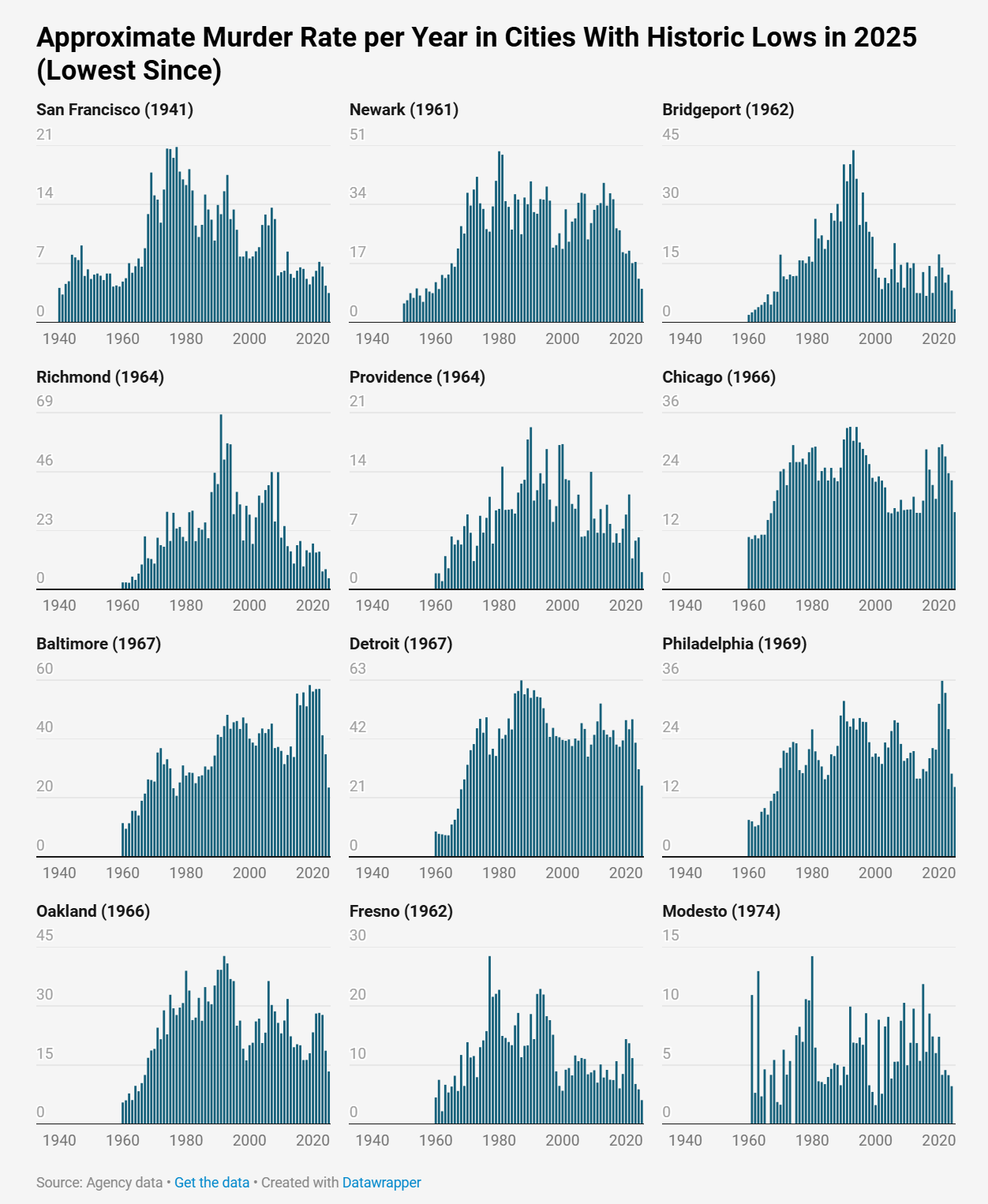

As I’ve reported previously, crime is falling in many Western countries – and the positive reports keep coming in. In 2025, London had the lowest homicide rate since comparable records began in 1997. Ireland, a country of over five million, didn’t have a single gun killing. And as Jeff Asher shows in a great series of charts, many US cities had the lowest homicide rates in more than half a century.

A signaling story of modernism under scrutiny

In the twentieth century, aesthetic modernism made architecture, sculpture, and other arts strikingly austere. What can explain this? In a 2021 essay, Scott Alexander discussed the theory that as ornamentation became cheaper, you couldn’t use it to signal your wealth – and so rich people instead turned to signaling taste. But in an article for Works in Progress, Samuel Hughes argues that this theory doesn’t fit the facts.

Some art forms, such as sculpture, actually remained expensive – and so the theory can’t explain the turn from figurative sculptures. But a more fundamental problem is that most rich people weren’t particularly interested in modernist style. Instead, modernism was driven by artists and intellectuals.

Signaling explanations are popular in part because they have a gotcha element – giving you the sense that you’ve caught out the hypocrites red-handed. Unfortunately, it’s not an attitude that encourages detached truth-seeking. These explanations should be carefully scrutinized, just like any other. That’s precisely what Samuel has done in this article.

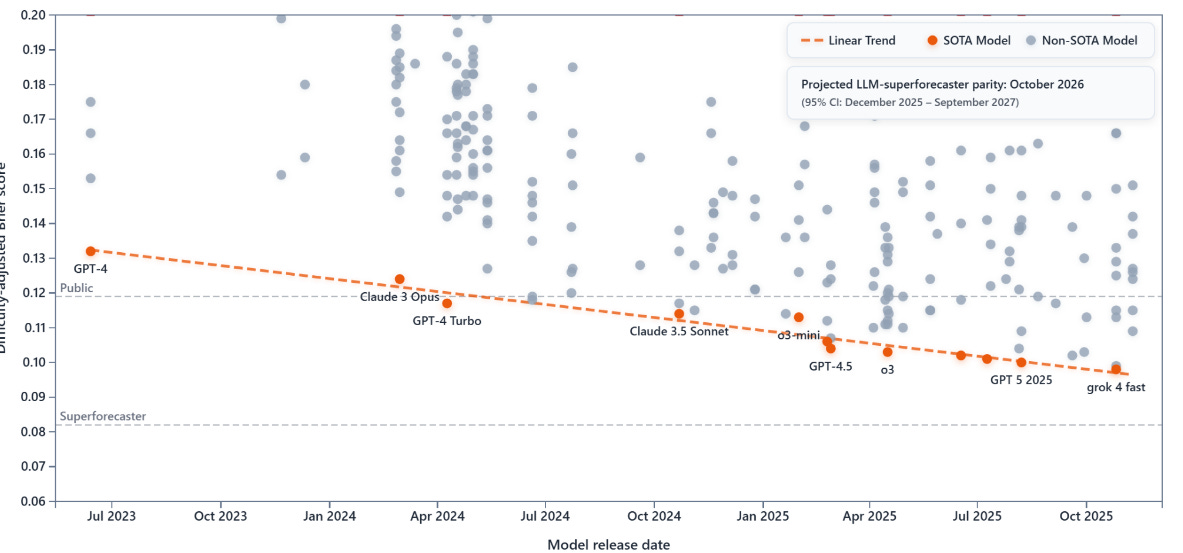

AI expected to reach human level at forecasting in October

The Forecasting Research Institute recently released a study of how AI models compare with human experts at forecasting. While none of the tested models managed to beat a team of superforecasters, they keep getting better. Extrapolation of the current trend would put AI models at parity with humans in October.

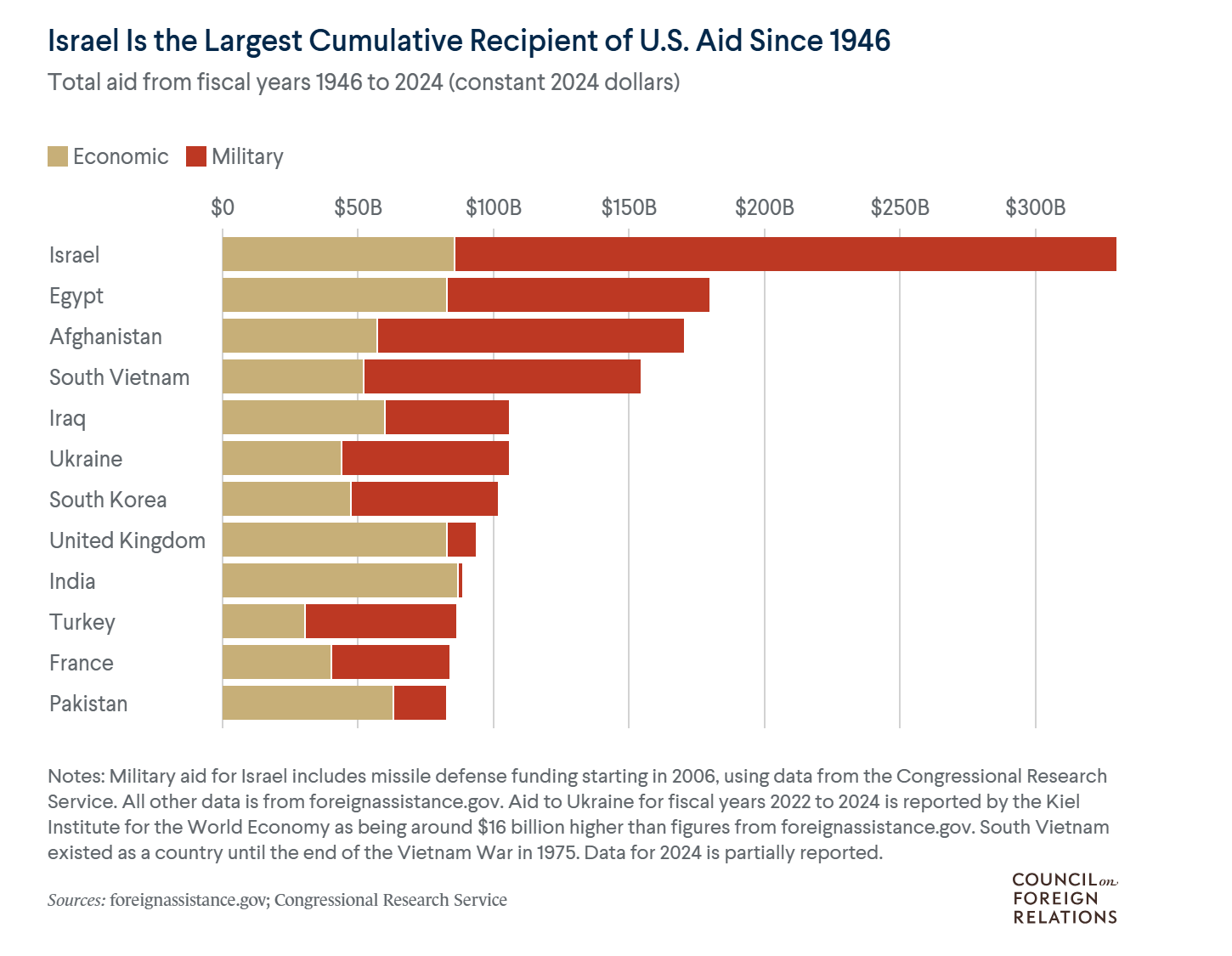

Netanyahu says Israel will phase out American aid

In an interview with The Economist, Israeli prime minister Benjamin Netanyahu says that he wants to fully end American military aid within the next ten years. This would be a historic shift, since Israel is the country that has received the most American aid since World War II. According to a report by William D Hartung, American military support to Israel during the first two years of the Gaza war was $21.7 billion or more, depending on how you count.

Betting on Anthropic

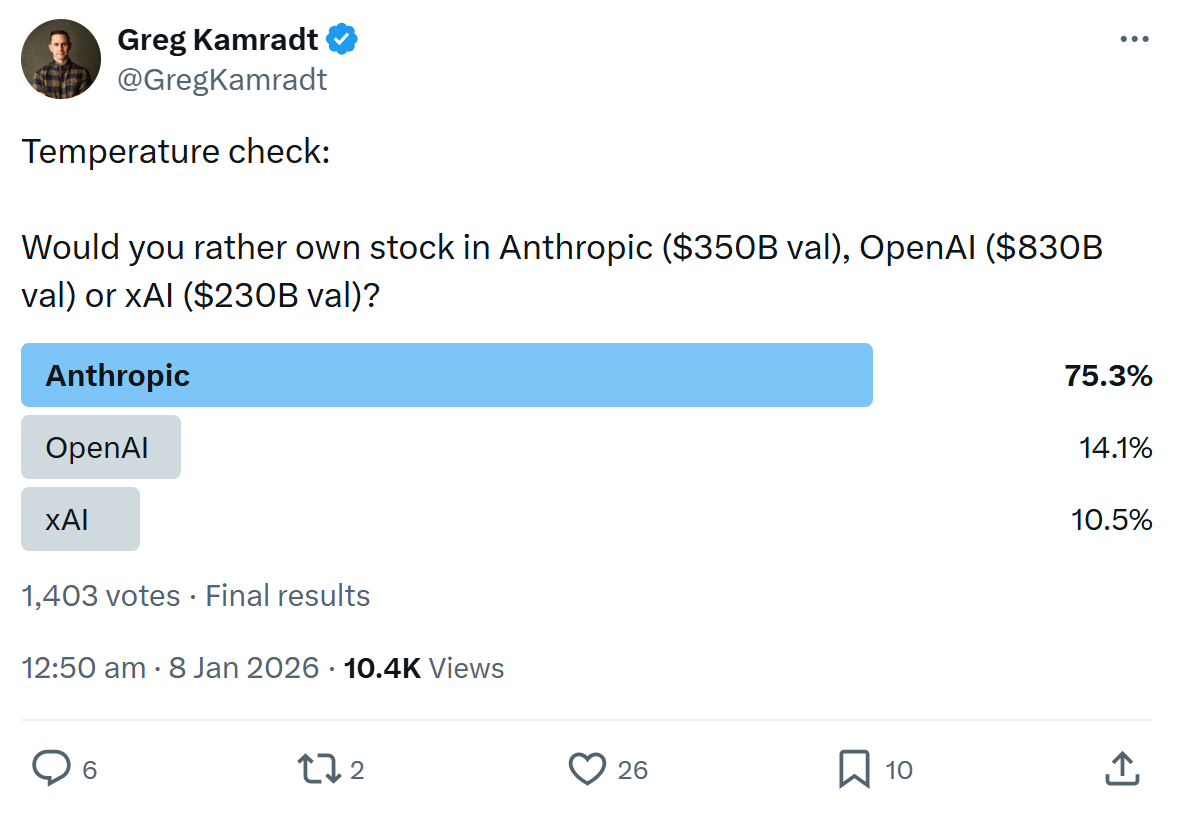

Recently, there’s been a lot of enthusiasm on X about Anthropic’s Claude Opus 4.5 – and especially Claude Code, which can execute various tasks (including non-coding tasks) autonomously. Consequently, belief in Anthropic as a business is also growing on X. A recent poll asked: at current valuations, which private company (excluding Big Tech) would you rather invest in – Anthropic, OpenAI, or xAI? An overwhelming majority voted for Anthropic.

In brief

When the Royal Society was founded in 1660, it aimed for science to rest on publicly observable evidence – to ‘take nobody’s word for it’. But in an Asimov Press article on scientific methods, Andrew Hunt argues that we still fall short of this ideal, because the scientific prestige system doesn’t reward the sharing of methods the way it should.

Sentinel Global Risks Watch’s forecasters believe that Iran could soon face American military intervention. On average, they estimate a 59 percent chance that the US will strike by 31st March. That need not lead to regime change, however – they put the chance that Ayatollah Khamenei will be dead or out of power by then at 26.5 percent.

Tools for detecting AI-generated text have become more effective, with commercial detectors such as Pangram being especially reliable. Henry Shevlin argues that universities could use these tools to detect students who rely on AI for essays.

Matt Clancy has published a useful list of progress-related links and a Works in Progress essay on his personal experience of progress.

The Cato Institute has a request for research proposals on the psychology of progress. Applications close on 31st March.

That’s all for today. If you like The Update, please subscribe and share with your friends.

I'm a former superforecaster, and while working at Metaculus I introduced the practice of recurring quarterly tournaments on short-term questions, which seems to have become a major source for these types of AI benchmarks and forecasting tournaments.

While I'm impressed with LLM forecasting performance in a general way, I don't find the specific headline findings of these benchmarks -- "AI expected to reach human level at forecasting in October" -- to be remotely interesting or important, and I'm mystified why anyone finds them convincing.

In general, I think the nonprofit forecasting space has suffered badly from its takeover by EA and I would not take the topline findings of any of these orgs very seriously on questions that overlap with "EA topics". Even before the takeover, Metaculus turns out to have been founded for futurist advocacy purposes by people with strong pre-existing views who don't know very much about practical forecasting, or really about practical anything. It would be nice if we had one of these that was actually run by people with a neutral "needs of the platform" ethos as opposed to being boosters for some predetermined external cause that they want supportive forecasting for.

On the preference to be served by a robot, I would strongly prefer home assistance (tidying, cleaning) from a robot over a person.

I would think the more menial and personal the task, the more I would prefer to be served by a robot.