AI economics needs to be weird

AI debates: the relational economy, propaganda predictions, and more

Welcome to The Update. Today, I’m covering some of the most important recent debates about the future of AI.

Will there be relational jobs in the weird world of advanced AI?

Experts don’t expect a robot expansion to transform the economy

In brief: possible deal between Pentagon and Anthropic, new book on messy jobs, and more

Will there be relational jobs in the weird world of advanced AI?

Economists keep devoting more and more attention to the long-run impact of AI. A few weeks ago, I covered the debate over the Forecasting Research Institute’s survey on AI-fueled growth. Now, one of the contributors to that debate, Alex Imas, has published an essay on how the labor market could evolve as AI becomes more advanced. It’s a model of science communication, using the conceptual machinery of economics in a way that’s accessible to a general audience.

When farming was mechanized, employment shifted to manufacturing. When manufacturing became more automated, employment moved on to services. But where will it go if AI can do office work and many other services more efficiently than humans?

Alex’s answer is the relational sector – jobs like teaching, therapy, hospitality, and art. He argues that people are attracted by scarcity, and that even in a world of abundance, one thing remains scarce: genuine human involvement.

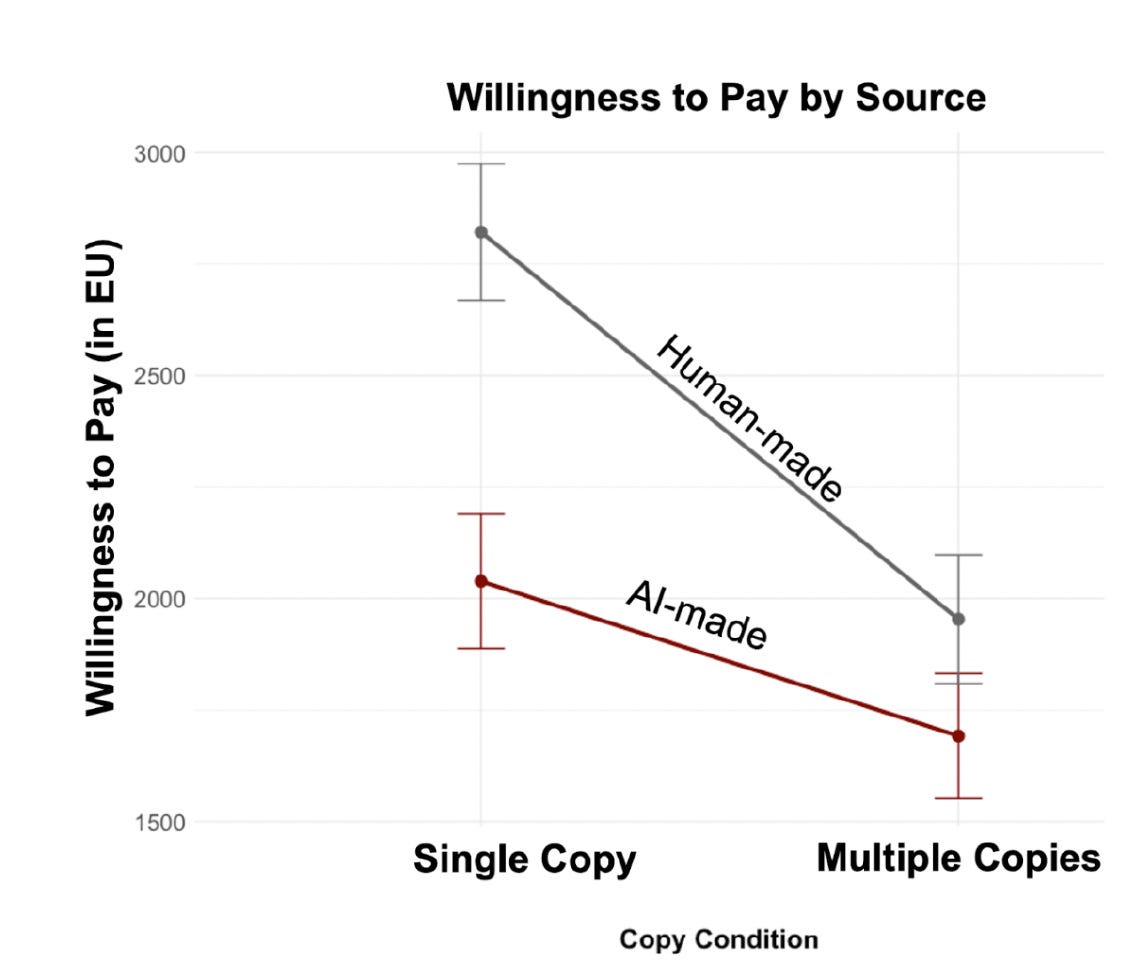

He supports his argument with experimental evidence. In one study, Alex and Graelin Mandel found that people’s willingness to pay for a product roughly doubled when others couldn’t buy it. Moreover, this exclusivity premium was cut in half when the product was produced by AI. Alex argues that AI products feel inherently reproducible, making them less valued.

Alex’s essay has received an overwhelmingly positive reception, but there has been some pushback. Philip Trammell argues that Alex ignores a crucial factor: that historically, most of our additional wealth has been spent on entirely new categories of products. Despite centuries of sustained growth, spending on ‘human performers’, as he puts it, remains close to zero. He argues that this pattern will continue in the age of AI, with new goods and services diverting spending away from ‘human-intrinsic goods’.

Alex responds that Philip’s focus is too narrow (quotations have been lightly edited for clarity):

I do not think performers are the right proxy for the category I am describing; I think the example of performers in general here is a red herring. My argument is not that the future economy is mostly about art, performance, or authenticity goods in the narrow sense . . . the relevant category is much broader.

Here’s the full list, from the essay itself:

The durable jobs will be in the relational sector, where the human element is the product itself.

Some already exist and are growing: nurses, therapists, teachers, boutique fitness instructors, personal chefs, bespoke tailors, craft brewers, live performers, spiritual guides, childcare workers, and many varieties of hospitality and care work. Others are emerging: experience designers, human-AI collaboration artists, provenance certifiers, community curators. Many haven’t been invented yet, just as six out of ten jobs people hold today didn’t exist in 1940.

Trevor Levin quote-tweets a screenshot of this list, arguing that many of the jobs aren’t as relational as they seem:

Extremely unclear to me that ‘the human element is the product itself’ for almost any of these examples.

Trevor’s point is that we’re more interested in quality for its own sake than Alex suggests. We wouldn’t stick with human-made products if robot beer and chatbot therapy were better and cheaper.

In the comments, Alex clarifies that he doesn’t see all these jobs as purely relational:

In many of these sectors, a large share of what is currently bundled together as ‘services’ consists of tasks that are not themselves relational, and those tasks may well be automated away. But that does not imply the disappearance of the job category. My argument is that the remaining relational component will become the main value proposition of those jobs, and these services will now provide a higher-quality product since people can focus on the relational aspect of the job (see the O-ring model for why).

This is an interesting argument, but I’m not sure it shows that there will be many relational jobs. Let’s look at Jason Abaluck’s succinct yet thorough critique:

I liked @alexolegimas’s essay too, but I think the argument smuggles in a lot of likely false assumptions about the stability of preferences in a wildly different environment.

‘Chefs, tailors, craft brewers, experience designers’ are all jobs which are valued principally for their outputs. These jobs won’t exist at large scale in a future of full automation where machines can make any food essentially free.

People in the status quo value art more if a person made it, but that will change in a world where machines have all the capabilities of people and more. It is an equilibrium outcome, not a permanent law of nature (LLM today = your desktop, not a brilliant companion).

Things like live performance and sports would still exist, but these are niche. The world of the essay might persist for a few years during a transition period but I think it’s not a stable outcome.

And this is just about how humans would change organically when the equilibrium changed – once we are in a world where most jobs are in the ‘relational sector’, we are also likely in a world with machine gods who can alter human biology.

With full automation, people won’t have jobs and that’s okay – they won’t need jobs, and they’ll find other sources of value in their lives. Maybe they’ll link up with the machine intelligences or use far-future drugs to achieve previously inconceivable experiences.

Essentially, Jason gives three arguments:

1. Many of the jobs Alex lists have a smaller relational component than he suggests (see Trevor).

2. A small fraction are indeed purely relational, but since superhuman AI would dramatically shift consumption patterns, demand for those jobs could still collapse (see Philip).

3. Superhuman AI could reshape the human brain, drastically reducing our interest in relational services.

I agree with all three, as well as Jason’s broader point: Alex describes a transition period, not where we’re eventually heading. This is a common issue in the debate on advanced AI. Many participants underestimate how deeply it would change the world. Jason’s argument about altered preferences might seem outlandish, but I think it’s entirely plausible. Witness the immense popularity of GLP-1 drugs, which change our preferences for eating. In my view, they’re a pale forerunner of what’s coming.

Why AI propaganda hasn’t been as effective as feared

In 2021, Daniel Kokotajlo wrote ‘What 2026 looks like’: an AI scenario predicting rapid progress and growing societal effects. Since it’s now 2026, we’re in a position to evaluate his predictions. Many people think he got a lot of things right, especially about technical milestones. But in an interview with Asterisk’s Clara Collier, he concedes that he overestimated the effectiveness of AI propaganda.

Clara:

You have this story that unfolds through 2024 through 2026 where there’s a vast increase in the production of AI propaganda, more sophisticated use of highly partisan AIs to create online filter bubbles, and much more AI-enabled censorship.

. . .

AI has been a hugely influential technology, but it has influenced the information environment a lot less than many people – not just you – anticipated. Of the people who were making bold predictions about AI in 2021, I think a lot of that was about misinformation and censorship. And it’s interesting that this angle, which even relative AI skeptics were concerned about, seems to have had less of an impact than many of us anticipated. Why do you think that is?

Daniel:

Normally when people ask me this question, I say that governments, corporations, political parties and campaigns, and other powers that be have been slower to adapt and push this technology for evil purposes than I feared they would be. But actually, I think that might not be true. I’m kind of confused and want to think about it more.

On a technical level, I think all the things I talked about – like the use of AI for A/B testing – are totally possible. I have heard anecdotally that some of them are being done. Someone at OpenAI told me that in their opinion, the product teams are basically training ChatGPT to maximize user retention and probability of upgrading subscriptions.

. . .

Maybe what I got wrong is that in 2021, it felt like a lot of tech companies were on the left explicitly and were putting their thumbs on the scales to influence discourse through their moderation decisions. It felt like if that trend continued, they’ll be using AI to do it in five years, and it’ll be more effective. And then I predicted there would be this counter-backlash because there are people who don’t like the left who want their own space, and so they would create their own spaces.

I agree with Clara that many people worried prematurely about the threat of AI propaganda and misinformation. In 2019, OpenAI cited these concerns as a reason for delaying the release of GPT-2.

People would have been wise to listen to psychologists like Hugo Mercier, who has long argued that humans aren’t nearly as gullible as the misinformation discourse suggests. There’s also the problem of getting people to pay attention to your propaganda in the first place, as he points out on the 80,000 Hours podcast:

There’s already an essentially infinite amount of information on the internet. So the bottleneck is not how many articles there are on any given topic, because there is already way more than anybody will ever read. The bottleneck is people’s individual attention – and that bottleneck is largely controlled by, to some extent peers and colleagues and social networks, but otherwise mostly by the big actors in the field: by cable news, by big newspapers. And there’s no reason to believe these things are going to change dramatically. So having another thousand articles on a given issue, just no one is going to read them.

Some of the predictions about AI propaganda have been psychologically and sociologically naive. Folk theories are often far too cynical about human capabilities and motivations.

This cynicism also leads many people to underestimate the strength of our epistemic norms. AI companies that expose people to mass persuasion would face a major backlash, so it’s in their interest to develop models that are broadly truth-seeking. Consequently, I share Dan Williams’ optimism about the epistemic impact of AI.

Experts don’t expect a robot expansion to transform the economy

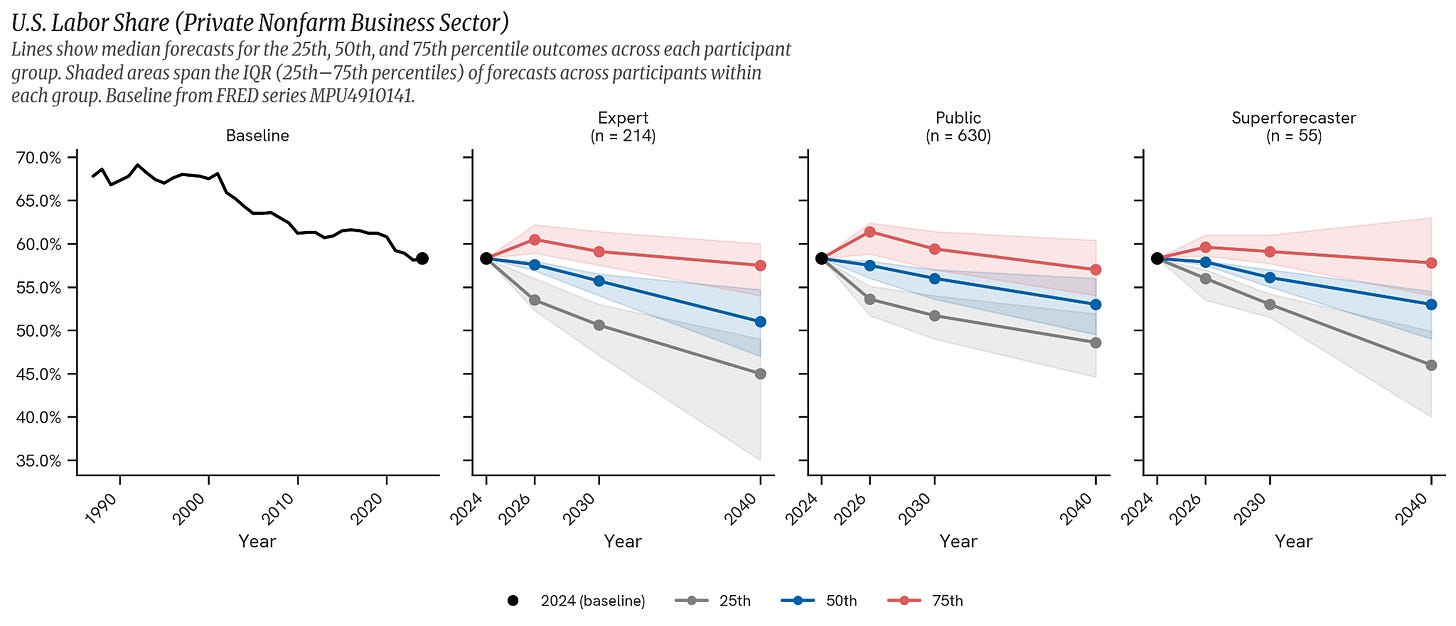

The Forecasting Research Institute has released a new set of surveys on the future of robotics, following up on its recent paper on the effects of AI on economic growth. On average, experts expect autonomous robots to pass the Coffee Test – reliably making coffee in an unfamiliar kitchen – by 2034, and to remove an appendix without human assistance by around 2040. They predict that the global industrial robot stock will more than triple by 2040, but don’t think this will have a transformative economic impact. Workers’ share of economic output is forecast to decline, but no faster than the historical trend.

In brief

Donald Trump says a deal between the Pentagon and Anthropic is still possible

Anthropic introduces identity verification for certain use cases

Oliver Habryka on ten non-boring ways he has used AI in the last month

Democrat Alex Bores launches plan to incentivize hiring humans and pay workers displaced by AI

Benjamin Todd argues that AI agents are unlikely to be aligned by default

Unauthorized users have accessed Anthropic’s restricted Mythos model

Eliezer Yudkowsky and Nate Soares’ If Anyone Builds It, Everyone Dies (September 2025) has sold 150,000 copies in English alone

I agree with your top line point about being open to weirdness, but generally see most "AGI scene" people as being insufficiently economically aware and think they would strongly benefit from moving closer to Alex's position.

> Essentially, Jason gives three arguments:

> 1. Many of the jobs Alex lists have a smaller relational component than he suggests (see Trevor).

> 2. A small fraction are indeed purely relational, but since superhuman AI would dramatically shift consumption patterns, demand for those jobs could still collapse (see Philip).

> 3. Superhuman AI could reshape the human brain, drastically reducing our interest in relational services.

I don't really buy 1, and discussed it with Trevor a bit here: https://x.com/herbiebradley/status/2046352086313668865. I haven't heard a satisfactory counterargument to the point that the AI-only product has to compete with AI + human, so the quality baseline is raised anyway. For therapists, don't you basically have to assume that there are no inherently human tasks in the job of "therapist" to suppose that AI can be a full substitute (implicit in saying "better")? It's an *adjacent* product which fills some of the market demand but does not substitute.

On 2, I'm not convinced it's a very small fraction. But on collapse, Alex's quote here directly argues against Philip: "Many haven’t been invented yet, just as six out of ten jobs people hold today didn’t exist in 1940." He's arguing that part of the expanding varieties is the expansion in relational service jobs. I haven't yet seen a good argument against the reasonable point that we should expect many more new such jobs to appear!

On 3, interesting take, but it seems far, far too uncertain, even as someone AGI-pilled, to really incorporate into a model here.

I don't think my intuition here rests on Alex's suggestion that many white-collar jobs have a core and underappreciated relational component, but I also think that's probably true, and those who haven't worked in a large bureaucracy and significantly interacted with internal politics wildly, wildly underestimate it. Sometimes you can directly see the issue: people who have only ever worked as SWEs or AI researchers actually think that most jobs are highly intelligence-loaded.