Why does the US take AGI more seriously than Europe?

Plus: American cities see fewer births, most YC founders are under 25, and more

AI and economic growth, continued

The debate about the economic impact of AI continued over Easter. Quotations have been lightly edited for clarity.

Tom Cunningham:

As far as I can tell, every coauthor on the FRI econ-impacts-survey who’s tweeted about it thinks the respondents are underestimating the impacts of rapid capabilities growth.

Itai Sher gave a critical response:

So now we do surveys and the methodology is if the authors (actually just the ones who tweeted about it) think the survey respondents underestimated something, we treat that as evidence that they did?

Tom:

I’m not dismissing the survey results, but the observation from these authors seemed notable. I personally thought most of the respondents are misinterpreting the questions, or are mistaken, and then it was notable to me that four authors of the paper publicly stated they felt the same way.

As I mentioned on Thursday, I also wonder whether respondents fully understand and accept the scenario with rapid AI progress. It’s hard to survey people on hypothetical scenarios with so many moving parts.

There has also been further debate on the object-level question: how fast growth will be. Andrey Fradkin:

I am more optimistic than my fellow economist forecasters about annual GDP growth by 2050. That said, even my forecast is not as high as the >10 percent forecasts that some are predicting. A few reasons why:

The spread of AI capabilities is greatly increasing variance (which includes substantial weight on the negative scenarios including war and revolution).

It’s likely that AI will cause a greater decoupling of apparent progress and GDP, so that focusing on GDP will miss something.

A lot of economic bottlenecks in the US are regulatory, and in many states of the world, it will take more than 20 years for governance to adjust to let AI subsume these.

Healthcare is a large share of GDP, and even though AI will drastically increase medical progress, we may still need RCTs before medicines are widely used and affect GDP.

Nonetheless, the possibility of extremely fast growth cannot be dismissed, and I place about 10 percent weight on these scenarios.

Andrey clarified that his forecast doesn’t assume rapid AI progress by 2030:

Yes, this forecast is unconditional. I believe the capabilities in the ‘rapid’ scenario will almost certainly be achieved within 20 years, just not necessarily by 2030. In the survey, I didn’t take enough time to consider the conditionals (was lazy), but my weight on the >10 percent annual GDP growth scenarios is much higher in that case (probably 30 percent probability). I still think that my points above apply. Will AI capabilities result in more housing in SF?

Just to push on this because I feel this is a critical ambiguity in all this discussion: if you *literally* believe that AI can do all intellectual work, including robotics, then things get crazy, right? Like outputs of goods and services will double every year? Housing in the Bay Area seems totally irrelevant somehow. Maybe I need to write something more concrete about this.

Does GDP make sense in that world? Housing is a major component. I still think humans will want to live in SF. But would love to read anything you write on this topic.

Rather than talking about GDP we could consider, e.g., energy production or production of robots in that world (though I think GDP would still likely be meaningful and grow enormously like it did during the Industrial Revolution).
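For a sense of scale, simple compounding shows how far apart the growth rates in this exchange are. The sketch below is illustrative only: the 2 percent baseline is an assumption added for comparison, not a figure from the survey or the thread, and the function names are hypothetical.

```python
import math


def doubling_time(annual_growth: float) -> float:
    """Years needed for output to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


def growth_factor(annual_growth: float, years: int) -> float:
    """How many times larger output is after `years` of constant growth."""
    return (1 + annual_growth) ** years


# Scenarios: an assumed 2 percent baseline, the 10 percent threshold
# discussed above, and "output doubles every year" (100 percent growth).
scenarios = [
    ("2 percent (assumed baseline)", 0.02),
    ("10 percent", 0.10),
    ("doubling every year", 1.00),
]

for label, rate in scenarios:
    print(
        f"{label}: doubles every {doubling_time(rate):.1f} years, "
        f"x{growth_factor(rate, 10):.1f} after a decade"
    )
```

At 10 percent a year, output doubles roughly every seven years. Doubling every year corresponds to 100 percent annual growth, which compounds to about a thousandfold increase over a decade, so the two scenarios differ by far more than the headline percentages suggest.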

Jason Abaluck also had a thread about how transformative AI could make GDP a less useful metric of economic progress:

In worlds where you get full automation and a production explosion, GDP ceases to be a decent proxy for welfare. We should really be forecasting other metrics in those worlds.

. . .

If automation drives the price of many or most goods to zero, then GDP growth will be a very bad proxy for welfare growth.

If robots make a free pill that makes you live forever, GDP does not increase. If robots send whatever food you want to your doorstep for free, GDP does not increase.

So really, forecasts about GDP in a world with complete automation are irrelevant. What matters is forecasts of welfare. Makes me think that next time I coauthor a paper asking partly about such futures, we should use alternative measures of growth!

This measurement issue is interesting, but I don’t think it explains why respondents predicted relatively muted economic growth. The fact that they thought that labor force participation rates would remain fairly high even in the scenario with rapid progress suggests they simply don’t expect a radically transformed economy by 2050.

Why does the US take AGI more seriously than Europe?

As a European, I’m struck by how much this debate is dominated by Americans. Almost all contributions I found came from people working in the US.

The same pattern holds in broader public discourse, as far as I can tell. In the US, the prospect of transformative AI is taken very seriously. Bernie Sanders recently said that ‘we are at the beginning of the most profound technological revolution in world history’. I don’t hear that kind of language from European politicians. While there obviously is a debate about AI here, too, it isn’t as ‘AGI-pilled’. Why is that?

AI adoption

One possibility is that the difference is driven by Americans’ greater use of AI. However, I doubt it’s the main explanation, since adoption rates are fairly high in some European countries, too.

AI leadership

Since all leading AI companies are from the US, Americans may believe they can shape how AI develops. By contrast, few hold this belief in my own country, Sweden.

Cultural openness to speculation

Another possibility is that Americans are simply more willing to entertain speculative questions. I think this may well contribute.

Safety nets

It’s also been suggested that a thinner safety net may make Americans more worried about AI leading to job losses. But my sense is that this factor is somewhat exaggerated, since unemployment hurts in Europe, too.

Scale of public discourse

Lastly, the US is such a large country that there’s room to debate a range of issues at the same time. Since European countries are much smaller – and since there’s no pan-European public discourse – a topic like transformative AI can more easily be sidelined.

There may be further factors I’ve not considered. Please comment if you have a view.

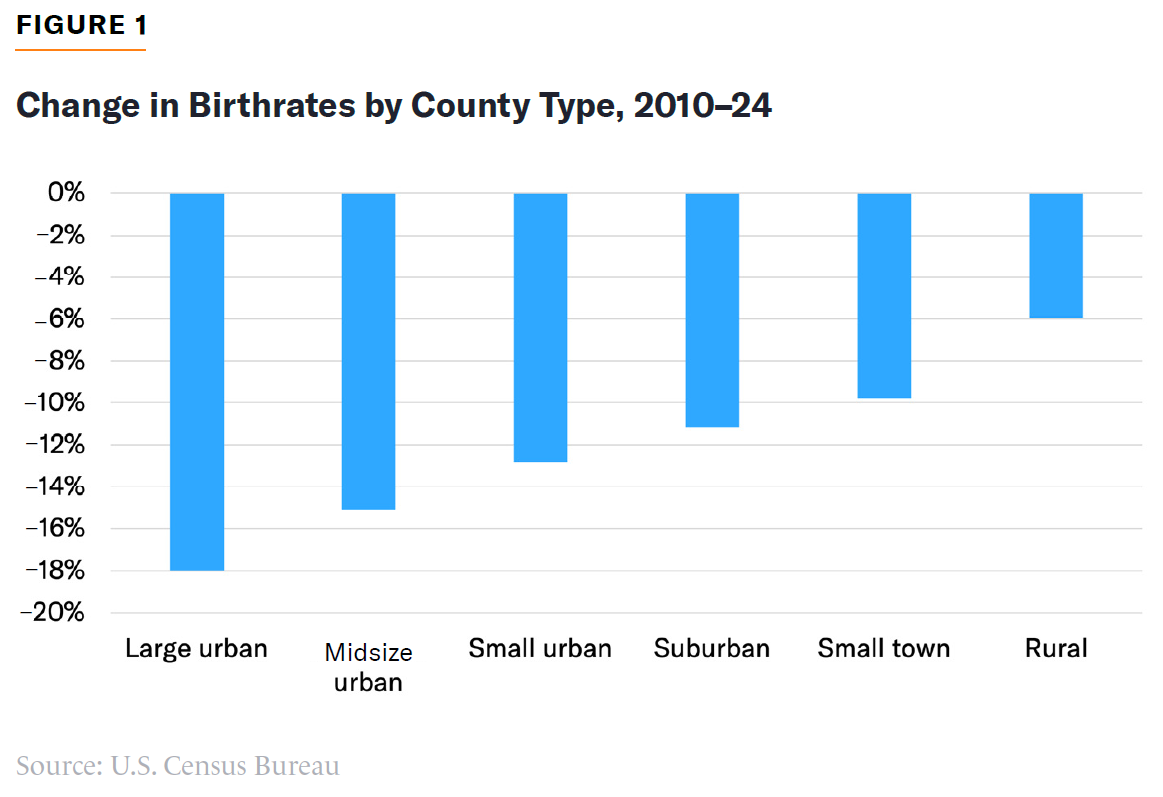

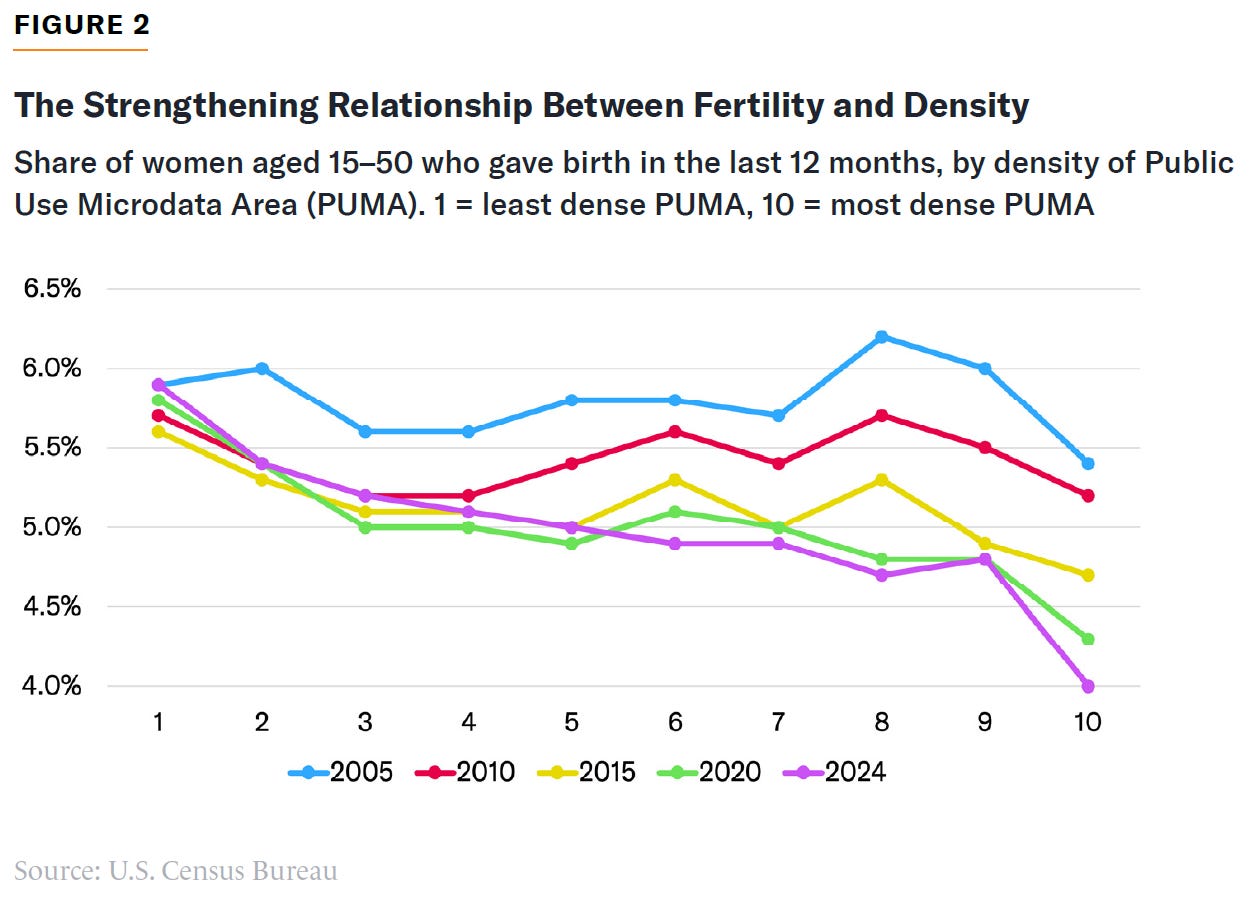

American birth rates are falling much faster in cities

The recent fall in American birth rates is disproportionately an urban story.

The relationship between fertility and density was weak as recently as 2005, but since then it has grown much stronger.

In some cities, the trend toward fewer children has accelerated since Covid. Manhattan had 19 percent fewer 0–4-year-olds in July 2024 than it had in April 2020.

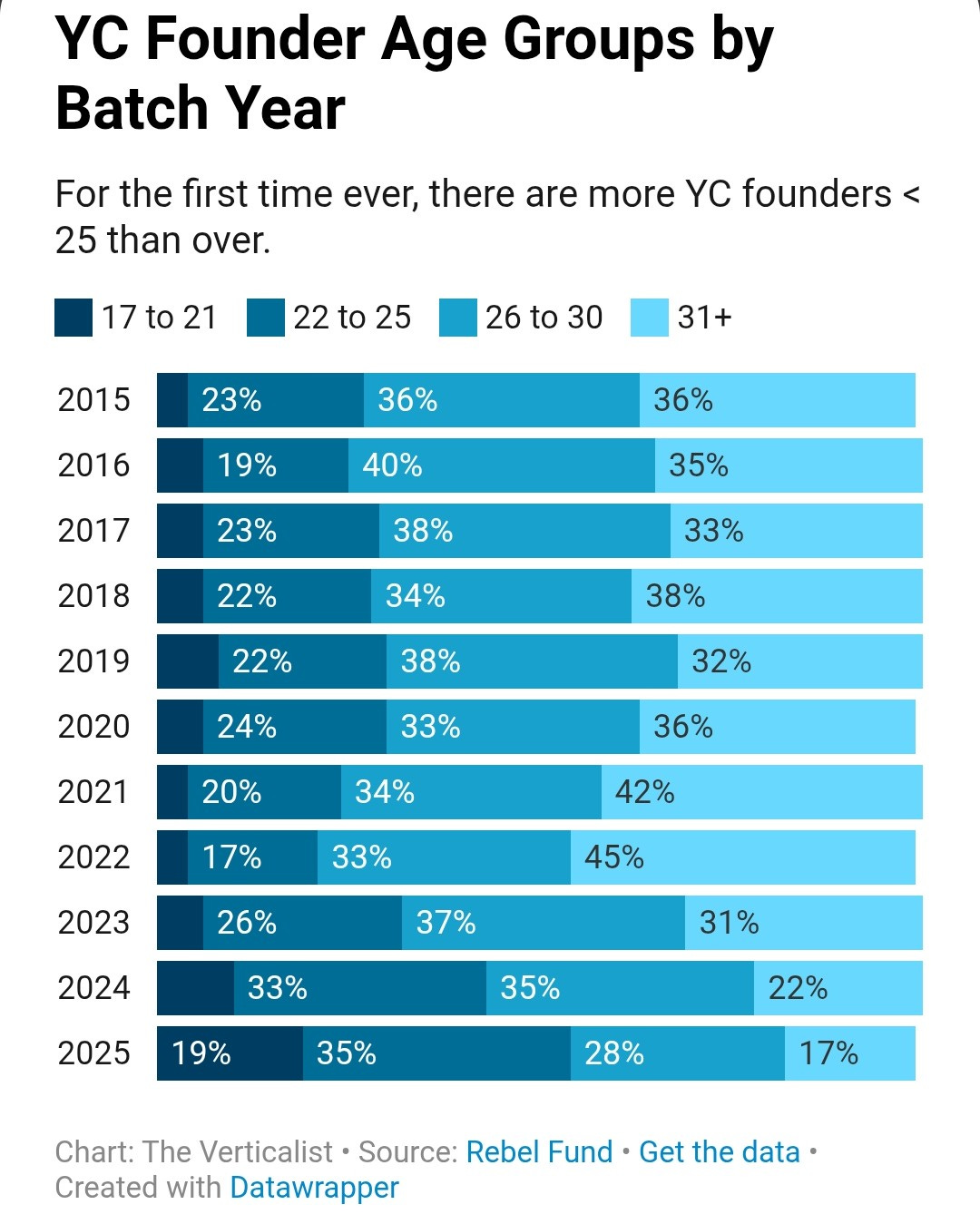

Most Y Combinator founders are now under 25

Startup founders in the elite incubator Y Combinator are much younger than just a few years ago, according to Rebel Fund estimates. This may be related to the rising share of AI startups.

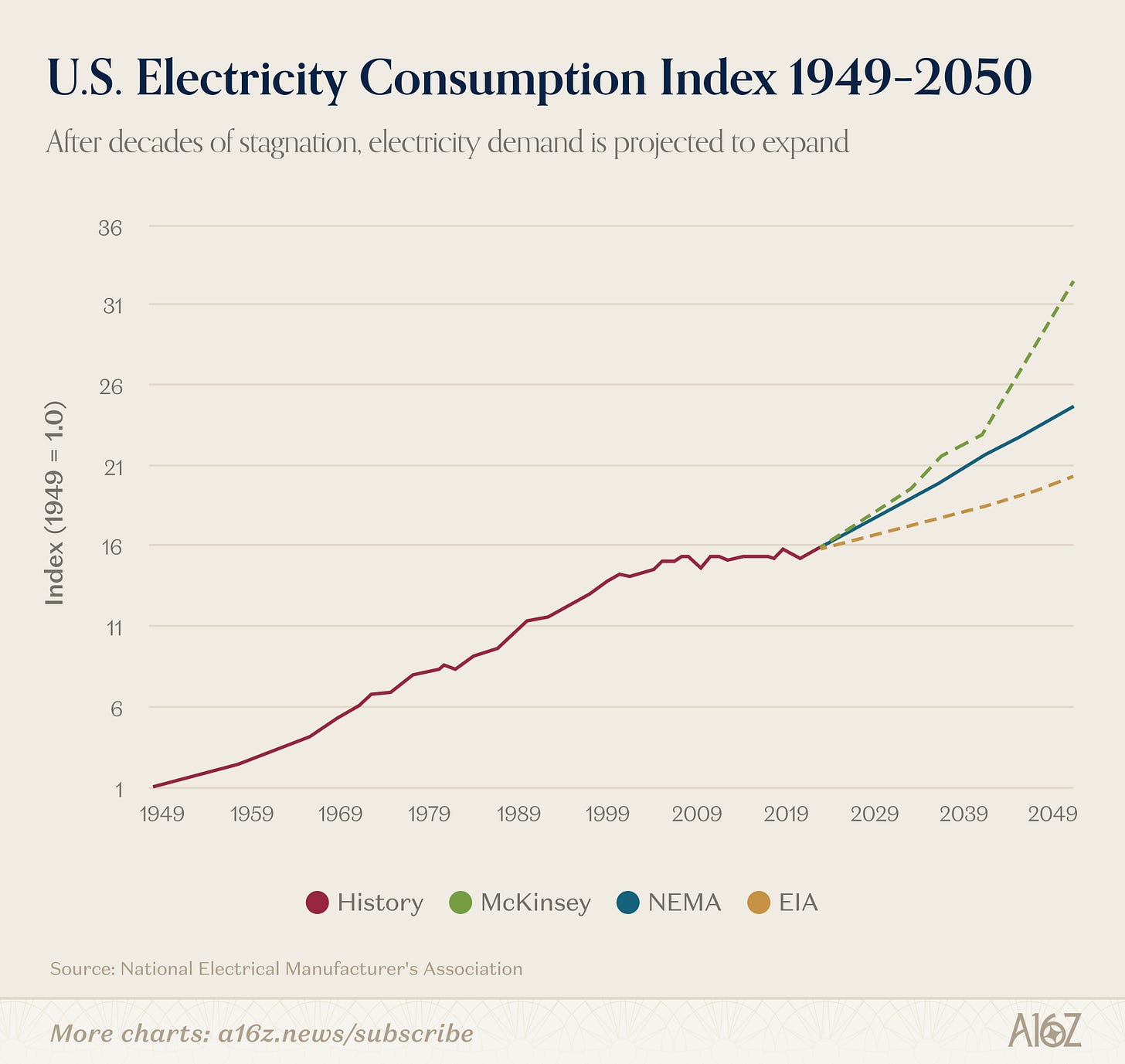

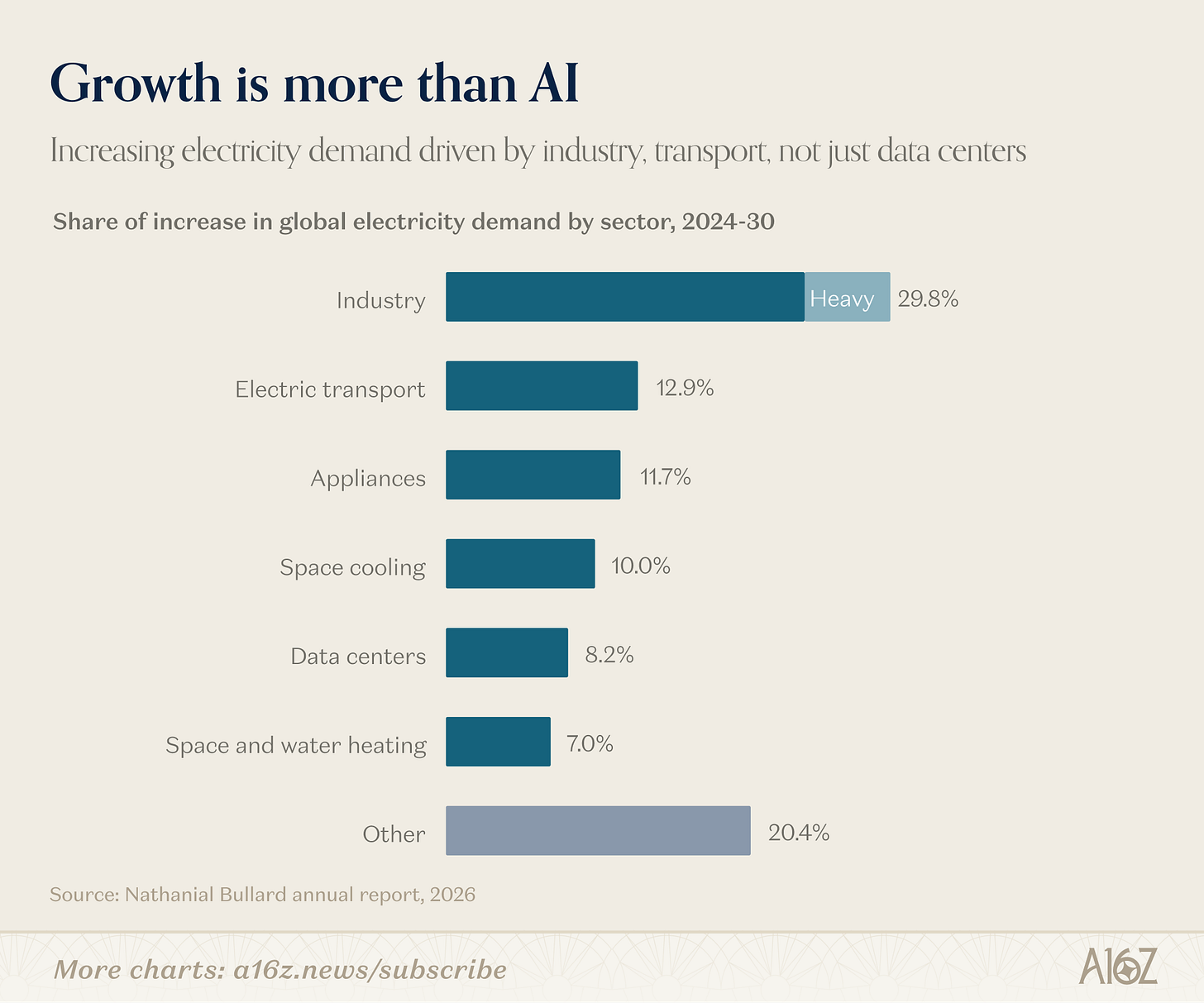

American electricity consumption is expected to rise

Several forecasts predict that American electricity consumption will increase again, after having remained flat for many years.

You might think it’s all about AI and data centers, but actually, a broad range of sectors are expected to contribute.

In brief

Saloni Dattani on why she’s skeptical of accidental discoveries. (See also the good discussion in the replies.)

Polymarket gives Viktor Orbán’s Fidesz a 31 percent chance of winning Sunday’s Hungarian election

The Burn Selection Hypothesis: humans may have adapted to fire injuries

Daniel Kokotajlo and Eli Lifland shorten their (already short) timelines to advanced AI

Helen Toner argues that the term ‘artificial general intelligence’ has become almost useless

Two METR researchers present a model of how AI could accelerate its own development

Contamination from laboratory gloves may have inflated some estimates of microplastic prevalence

I think one reason AGI discourse is more prominent in the US is contingent founder effects. The rationalist community has been very influential on US tech culture in particular, and it was founded by one of the original AI doomers. I believe Jasmine Sun has said, based on her reporting, that AGI discourse isn't very prominent in China either, so I think the Anglosphere is more of the exception here than Europe.

If I recall correctly, I recently read that US employees change jobs at about 10 times the rate of Europeans. With that much job fluidity, it is easy to imagine jobs evaporating.