How much will AI increase economic growth?

Plus: Europe replaces American aid to Ukraine, philosophy has grown more empirical, and more

Climate change has different drivers over the short and long term

In brief: the history of instant coffee, Iran increases repression, and more

How much will AI increase economic growth?

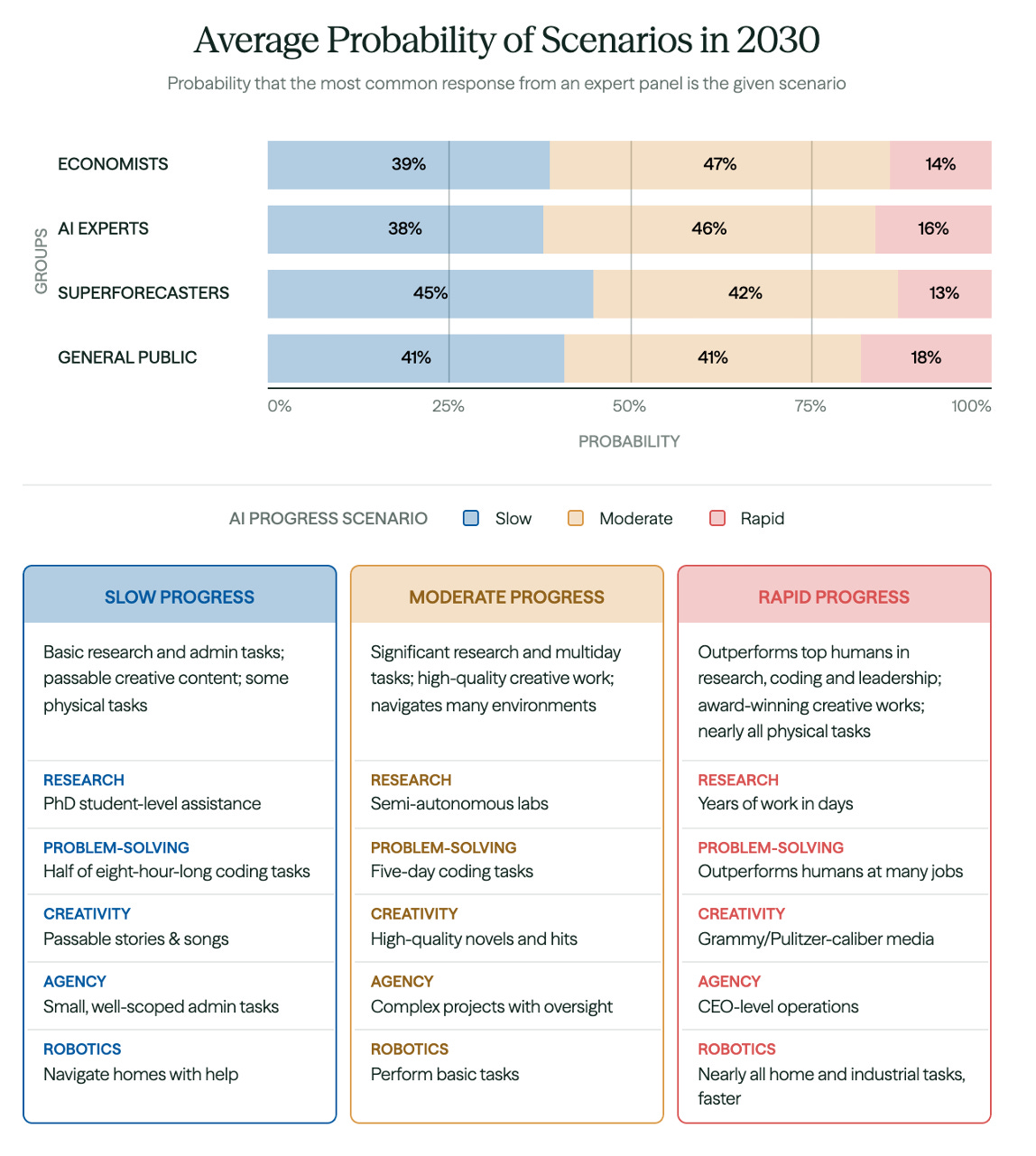

Elon Musk and Dario Amodei predict that AI will transform the economy within the next few years. But a new paper from the Forecasting Research Institute finds that most experts disagree. By 2030, they expect AI progress to be slow or moderate.

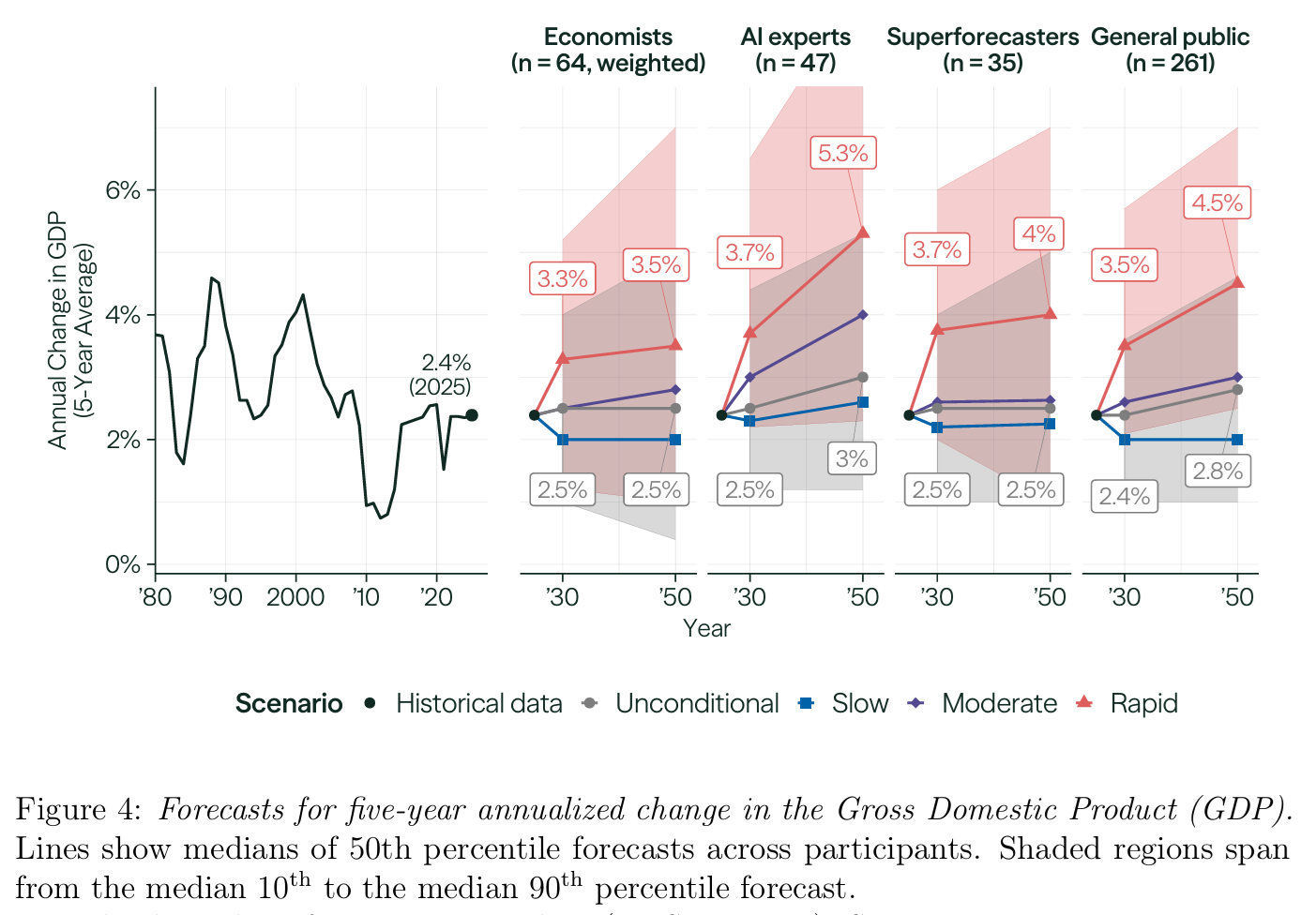

And while they predict that growth will be higher if AI progress turns out to be more rapid, they don't think it will take off dramatically. Economists are the most conservative of the groups surveyed.

This paper triggered a lively debate on X. Quotations have been lightly edited for clarity.

This is a great paper, but it contains a puzzle: forecasters expect that even if we automate most labor and wait 20 years, GDP will only increase by 45 percent.

I would love to hear how people are thinking about this.

. . .

Specifics: the median prediction for annual GDP growth over 2025–2050 with 'rapid' progress is 1.5 percentage points above the slow scenario, meaning that after 20 years of diffusion and AI progress, GDP will be 45 percent above trend (=1.015²⁵).

Some comparisons for a 45 percent increase in GDP per capita:

This is roughly the difference in GDP per capita between the US and Canada

This is the growth the US would typically get in 15 years

This is the growth China typically achieved in 5 years (averaged over 1992–2019)

. . .

Some explanations:

Diffusion takes centuries, not decades.

Forecasters expect that thousands of critical tasks will still be beyond AI even in 2050, but then the scenario seems to be ambiguous.

Powerful AI causes counterbalancing effects that push GDP down, e.g. war or disengagement from the market (but then one would expect the confidence intervals to explode further).
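The arithmetic in the thread above is easy to check. The sketch below assumes, as the thread does, that the rapid scenario's 1.5-percentage-point annual growth gap simply compounds over the 25 years from 2025 to 2050, and then inverts the 45 percent figure to get the annual rates implied by the US and China comparisons. It does not verify the underlying US or China data; the function names are illustrative.

```python
# Assumption (from the quoted thread): growth in the rapid scenario runs
# 1.5 percentage points a year above the slow scenario, compounded 2025-2050.
ANNUAL_GAP = 0.015
YEARS = 25


def cumulative_gain(rate: float, years: int) -> float:
    """Total proportional increase after compounding `rate` for `years` years."""
    return (1 + rate) ** years - 1


def implied_annual_rate(total_gain: float, years: int) -> float:
    """Constant annual growth rate that yields `total_gain` over `years` years."""
    return (1 + total_gain) ** (1 / years) - 1


gain = cumulative_gain(ANNUAL_GAP, YEARS)
print(f"Cumulative gain above trend: {gain:.1%}")  # prints 45.1%

# Annual rates implied by the thread's comparisons for a 45 percent increase.
for label, years in [("US, 15 years", 15), ("China, 5 years", 5)]:
    print(f"{label}: {implied_annual_rate(0.45, years):.1%} a year")
```

Treating the gap as a factor of 1.015 per year is a simplification; strictly, the yearly ratio is (1 + rapid growth) / (1 + slow growth), but at these rates the difference is negligible. The comparisons work out to roughly 2.5 percent a year for the US and 7.7 percent for China.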

Basil Halperin, who coauthored the paper, also finds it puzzling that respondents don't expect rapid AI progress to lead to much higher growth. He tries to steelman them, but finds both of his attempts unconvincing:

Yes, bottlenecks will slow progress; this is extremely important. But the rapid scenario under consideration has already chewed through most bottlenecks.

Political backlash + regulation could prevent rapid capabilities progress from accelerating GDP.

But again, in the rapid scenario, it’s plausibly too late. Also, to my understanding, this wasn’t a common justification (underrated IMO).

Arvind Narayanan thinks that the backlash will be powerful:

Many people seem surprised by the prediction in this report that even rapid AI progress won’t lead to explosive GDP growth. Couldn’t AI be different from past patterns?

In my view the fundamental barriers aren’t technological or even regulatory but social. Explosive economic growth implies a pace of social change that I predict the public in most countries will vehemently reject.

Take self-driving cars. Assuming continued rapid AI progress, there will soon come a time when it would be great for growth if you could get human drivers off the roads. Freed from the need to accommodate the limitations of human drivers, roads could be dramatically redesigned and land use patterns transformed.

But my head hurts even trying to imagine the backlash. I think banning human driving will remain completely outside the Overton window no matter the daily death toll. The actual debate we’re having now is whether to allow self-driving cars at all.

AI boosters are right that regulation is not a fixed constraint, and that if AI is powerful enough, it will be a force for change. But they get the direction wrong! It is true that regulation is largely a reflection of social preferences, but those preferences tend to be even more strongly opposed to rapid change than is reflected in policy at any given time. As AI-driven social change kicks in, we will see regulation start to change – in the direction of erecting more barriers, not tearing them down.

If your mental model of AI impacts starts and ends with capability growth, you’ll miss the layer that actually matters. Far from AI being a wild west, the diffusion layer is already highly constrained, and it’s quite possible that those constraints will tighten.

Economist Paul Novosad:

Economists and AI experts have very similar forecasts on what AI will be able to do in 20 years.

But the AI experts think it will have a much bigger effect on the economy than the economists do.

Why? Because economists study this stuff.

Self-driving cars have been considerably safer than human drivers for about 3–4 years. How many people have access to self-driving cars? Even 20 percent penetration is years and years away.

Adoption is slow, society doesn’t transform instantaneously, sometimes it doesn’t transform at all.

We still don’t have high-speed trains in the US and we are banning windmills. Technology is a constraint but it’s far from the only one.

Fellow economist Alex Imas responds:

My worry is that economists' forecasts of AI's impact on growth are colored too much by historical precedent. Historical precedent is important, but we should be humble about the possibility of AI being more transformative than prior technologies.

For example, the speed of transformation is at least partly determined by adoption and diffusion through the economy. If one’s model is based on AI being adopted within existing organizational structures, then diffusion will be quite slow – this is what we’re seeing now.

But consider the possibility of a 'Coasean Singularity': a scenario where AI drives the transaction and coordination costs that traditionally dictate firm size to near zero. This could lead to the (potentially fast) emergence of smaller, more nimble AI-first firms: new types of organizations that fall outside our current models and have no historical precedent. These firms will not face the bottlenecks of traditional firm structures, and the transformation and its resulting impact on economic growth would be much closer to the technological frontier.

I know that many economists are already thinking through these transformative scenarios. My guess is that as these ideas are developed further, forecasts will change as well.

I think this debate is excellent. What should we make of it? Why don’t people expect higher growth under the rapid AI progress scenario?

I agree with Basil that belief in a political backlash is unlikely to be a major driver. People often fail to fully account for this factor. Instead, I think they simply believe that integrating AI into existing workflows will be painful, as it is today. But it’s not obvious how to square this with the powerful capabilities the rapid progress scenario assumes. As Basil points out, many of the current bottlenecks should no longer apply given this scenario. You don’t need to worry as much about workflow integration when AI can autonomously do complex jobs end-to-end. Here’s part of the paper’s description of the rapid progress scenario:

A single AI agent can generate a Pulitzer (or Booker Prize-) caliber novel according to current (2025) standards, adapt the book into an engaging two-hour movie, negotiate the resulting book and movie contracts, and launch the marketing campaigns for both while its sibling agents manage the book publishing company and movie studio at the level of highly competent CEOs.

It could be interesting to study a limiting case: even more rapid progress, where AI quickly reaches superintelligence. Is there any scenario in which people would expect annual growth of ten percent or more? If not, one might suspect they simply don't take these kinds of scenarios fully on board.
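To see how far ten percent growth is from the survey's forecasts, it helps to compare doubling times. The sketch below contrasts roughly 2.5 percent a year (the US rate implied by the 15-year comparison in the thread above; an assumption, not a figure from the paper) with a hypothetical 10 percent, and shows the cumulative multiple over 25 years.

```python
import math


def doubling_time(rate: float) -> float:
    """Years for output to double at a constant annual growth `rate`."""
    return math.log(2) / math.log(1 + rate)


def growth_multiple(rate: float, years: int) -> float:
    """How many times larger output becomes after `years` years at `rate`."""
    return (1 + rate) ** years


# Illustrative rates only: a typical rich-country pace vs. "explosive" growth.
for rate in (0.025, 0.10):
    print(f"{rate:.1%} a year: doubles every {doubling_time(rate):.1f} years, "
          f"x{growth_multiple(rate, 25):.1f} over 25 years")
```

At 10 percent, the economy doubles roughly every seven years and grows more than tenfold over 2025–2050, compared with less than a doubling at 2.5 percent.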

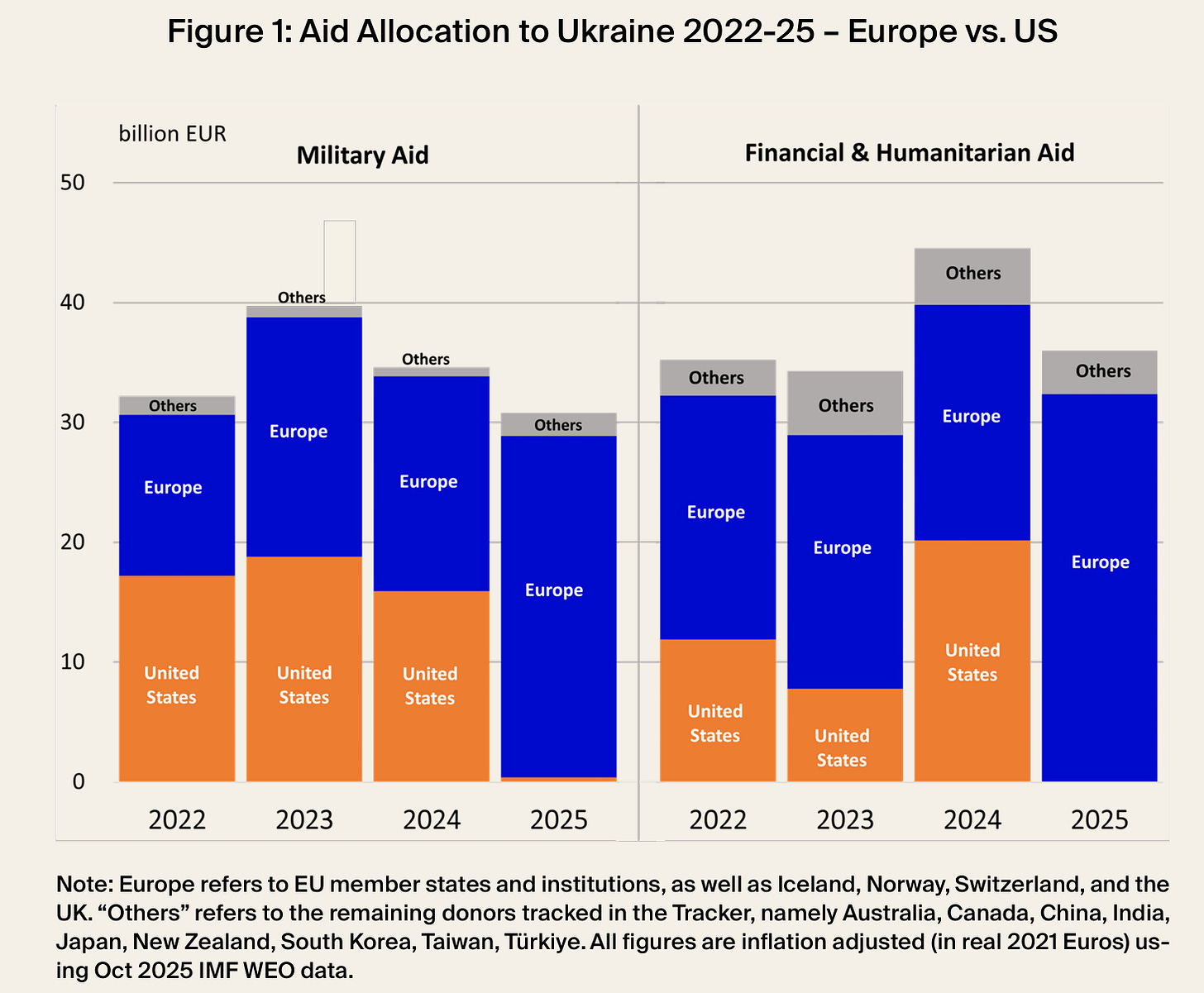

Europe replaces American aid to Ukraine

American aid to Ukraine has fallen to almost nothing, but as Europe has increased its support, the net decrease has been muted.

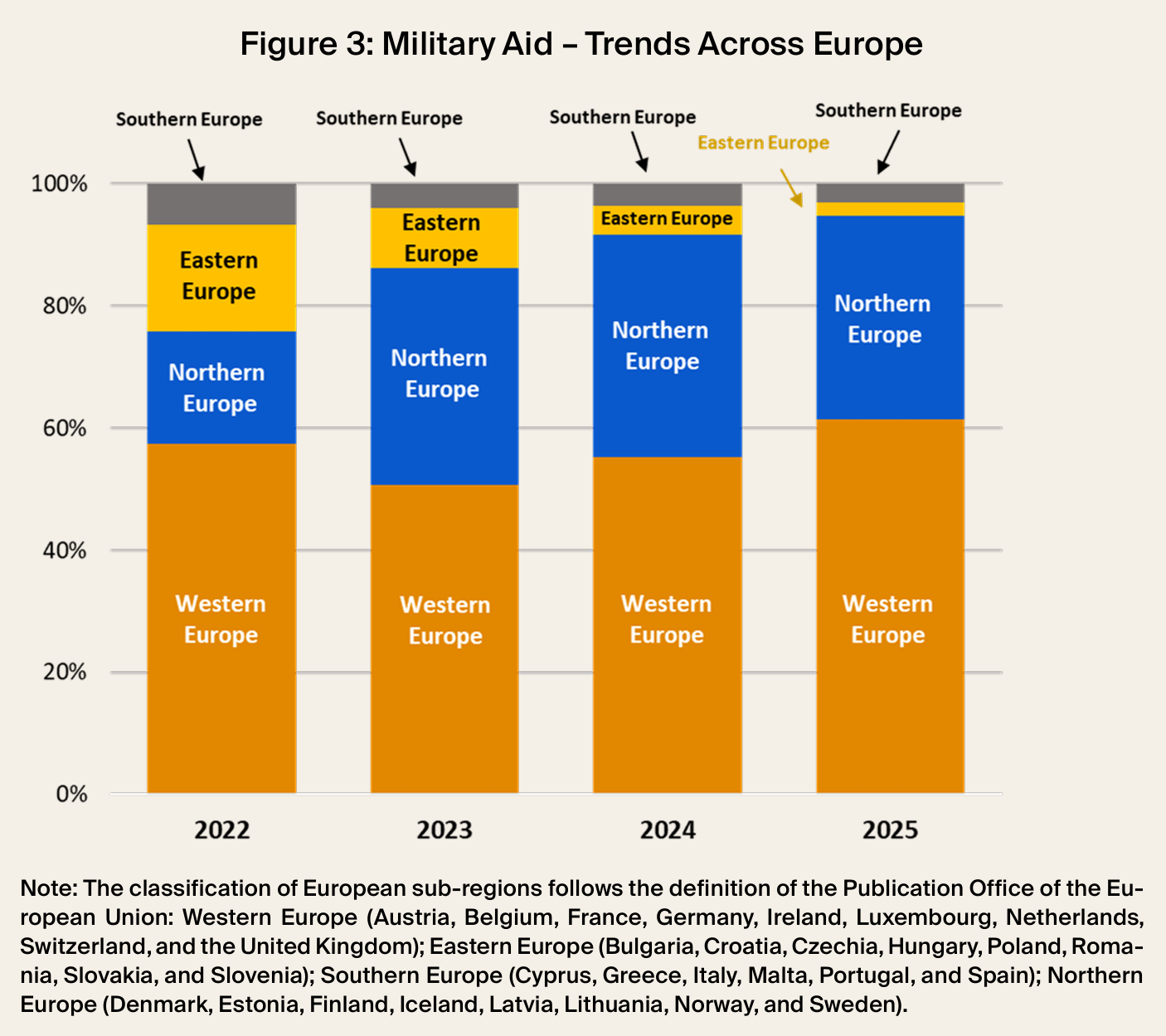

Financial and humanitarian aid mostly comes from the EU budget, but military aid is provided by individual countries, and some give much more than others. The Nordic and Baltic countries account for just 8 percent of the GDP of the tracked European donors, but provided 33 percent of military support in 2025. Conversely, Southern Europe accounts for 19 percent of that GDP but provided only 3 percent of military support.
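One way to read these shares is as a ratio: a group's share of military support divided by its share of donor GDP, where values above 1 mean it gives more than its economic weight. The sketch uses only the percentages quoted above; the name `support_intensity` is illustrative.

```python
# Shares of the tracked European donors' GDP and of 2025 military support (percent),
# as quoted in the text.
shares = {
    "Nordic and Baltic": {"gdp": 8, "military": 33},
    "Southern Europe": {"gdp": 19, "military": 3},
}


def support_intensity(gdp_share: float, military_share: float) -> float:
    """Ratio of a group's military-support share to its GDP share (1.0 = proportional)."""
    return military_share / gdp_share


for group, s in shares.items():
    ratio = support_intensity(s["gdp"], s["military"])
    print(f"{group}: {ratio:.2f}x its GDP share")
```

By this measure, the Nordic and Baltic countries give about four times their GDP share, while Southern Europe gives about a sixth of its share.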

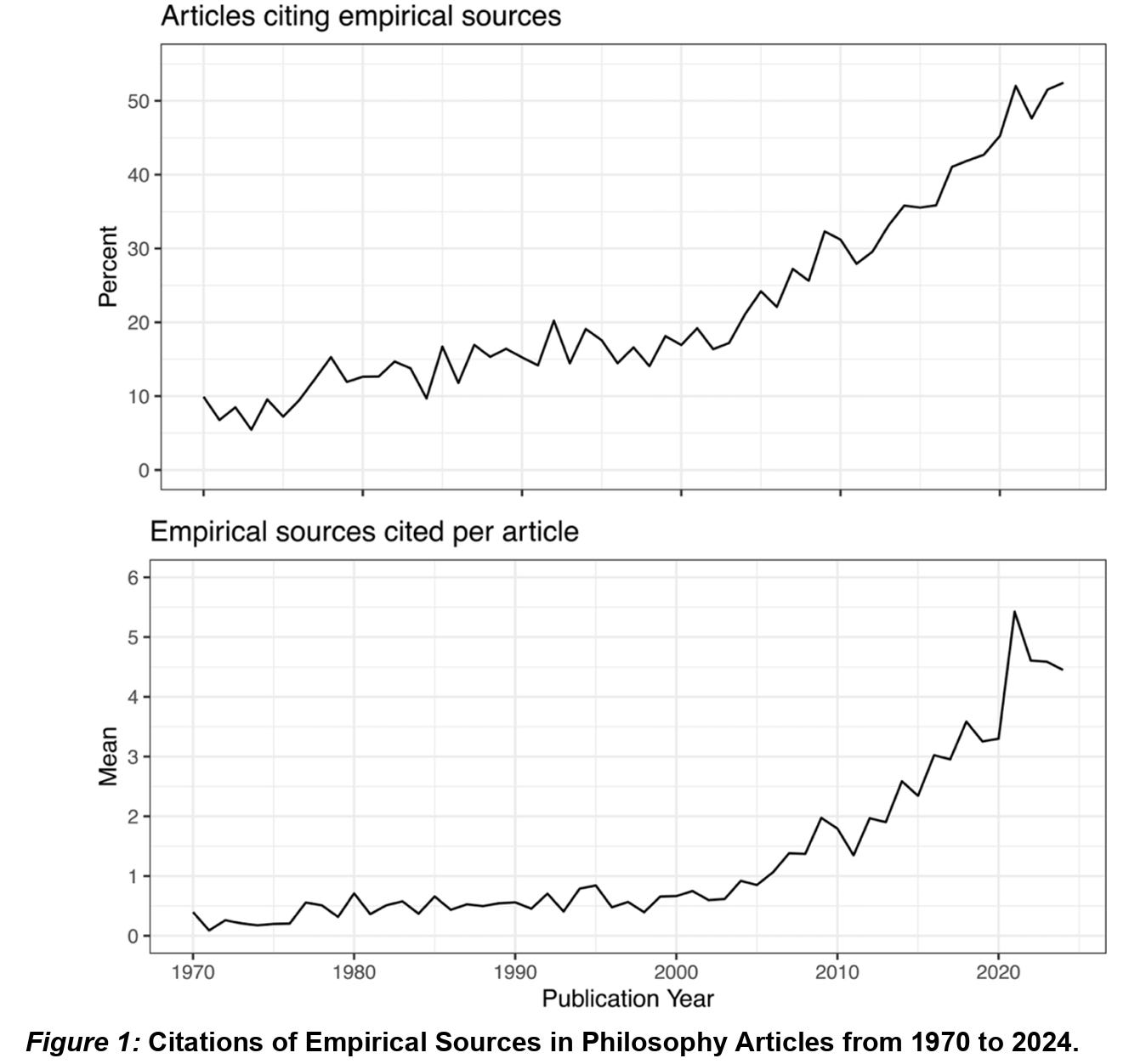

Philosophy has grown more empirical

Philosophy is often viewed as an armchair discipline that barely engages with empirical science. But in a new paper, Michael Prinzing shows that this view is increasingly outdated. In leading analytic philosophy journals, citations of empirical sources have risen substantially since the 1970s.

I think too many philosophers still ignore relevant empirical research, but this is a welcome change. Lagging curricula should be updated.

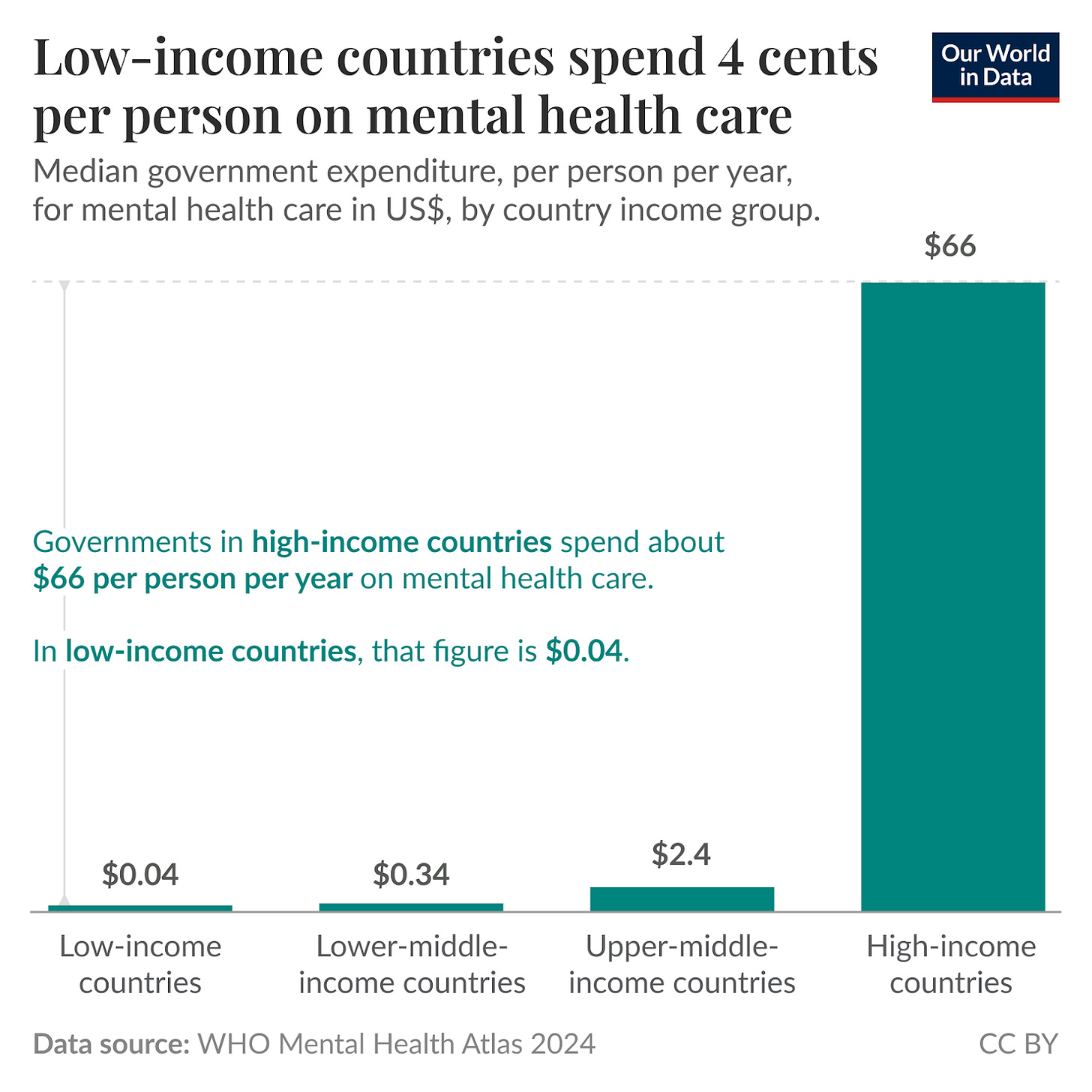

Poorer countries have very little mental healthcare

While richer countries have better healthcare across the board, the difference is especially striking in mental healthcare. High-income countries have roughly 70 times as many psychiatrists per million people as low-income countries. In terms of spending, the gap is even wider.

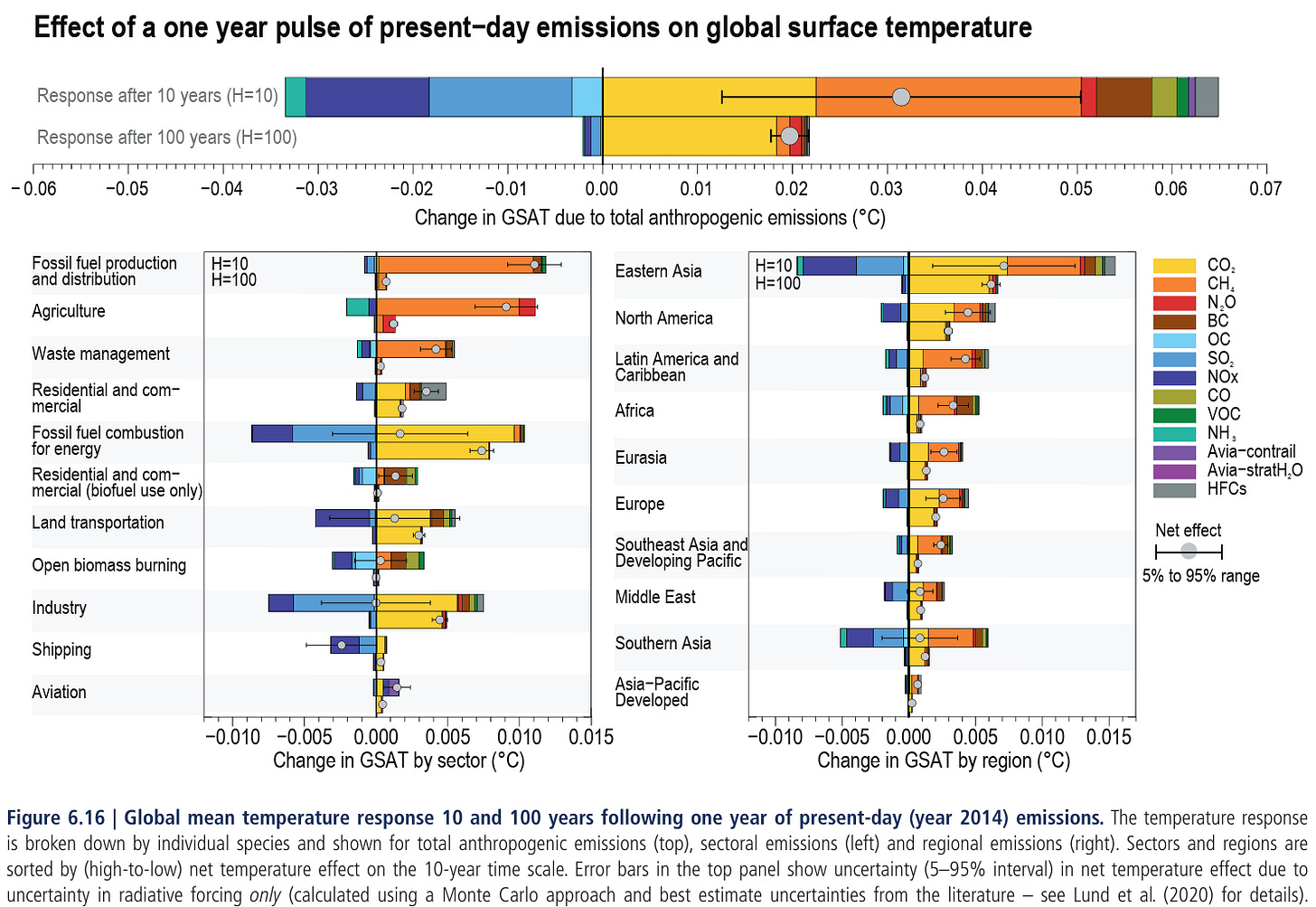

Climate change has different drivers over the short and long term

In public discourse, climate change is primarily associated with carbon dioxide (CO₂) emissions. But many other emissions also affect the climate, such as methane from agriculture and fossil fuel extraction.

Why then the focus on CO₂? Because most emissions break down within a few decades, whereas CO₂ persists. If you’re pessimistic about long-term climate prospects, it makes sense to prioritize CO₂ emissions.

Source: IPCC. Legend abbreviated.
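The difference in persistence can be illustrated with a toy model of a one-off emission pulse. The sketch below assumes the Bern carbon-cycle impulse-response parameters reported in IPCC AR4 for CO₂ (part of a pulse effectively never decays on these timescales) and a single e-folding time of about 12 years for methane. Both are approximations, and the sketch tracks only how much of the gas remains, not its warming effect; per tonne, methane is a far more potent greenhouse gas while it lasts.

```python
import math

# Approximate impulse-response parameters; treat as illustrative.
# CO2: Bern carbon-cycle model fit as reported in IPCC AR4; CO2_A0 is the
# fraction of a pulse that does not decay on century timescales.
CO2_A0 = 0.217
CO2_TERMS = [(0.259, 172.9), (0.338, 18.51), (0.186, 1.186)]  # (weight, tau in years)
# CH4: a single e-folding lifetime of roughly 12 years.
CH4_LIFETIME = 12.0


def co2_fraction_remaining(t: float) -> float:
    """Fraction of a one-off CO2 pulse still in the atmosphere after t years."""
    return CO2_A0 + sum(a * math.exp(-t / tau) for a, tau in CO2_TERMS)


def ch4_fraction_remaining(t: float) -> float:
    """Fraction of a one-off methane pulse remaining after t years."""
    return math.exp(-t / CH4_LIFETIME)


for t in (10, 50, 100, 500):
    print(f"{t:>3} years: CO2 {co2_fraction_remaining(t):.2f}, "
          f"CH4 {ch4_fraction_remaining(t):.3f}")
```

After a century, almost none of the methane pulse remains, while more than a third of the CO₂ is still airborne, which is why CO₂ dominates long-run warming.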

In brief

Benjamin Stubbing and Oscar Sykes on why it proved so hard to make good instant coffee

Paper finds that noncompete agreements reduce American wage growth

Study suggests that reducing top income taxes would increase tax revenue in Norway

Ethan Mollick argues that the chatbot interface doesn’t realize AI’s potential

Luis Garicano and Jesús Saa-Requejo on how Europe could take the lead in AI adoption

Sam Harris interviews Will MacAskill on the ups and downs of effective altruism

David Oks on the rise of scientific citation metrics and the problems they cause

Robert Long argues that LLMs are neither mere parrots nor similar to humans

American literacy isn’t as poor as recent discourse has suggested

March newsletters from Coefficient Giving and the Institute for Progress

On Imas' point, we might already be seeing novel firms of the sort he's talking about: https://www.nytimes.com/2026/04/02/technology/ai-billion-dollar-company-medvi.html?unlocked_article_code=1.X1A.TZcY.c7G_whUqLFQY&smid=url-share

As to superhuman AI, I think Tamay Besiroglu and the people at OpenPhil (now Coefficient Giving) had papers that explored the possibility of explosive growth under circumstances like this. The arguments seemed counterintuitive but compelling.

I think some of those percentile ranges should just be considered mistaken? I agree that there are many considerations at play but, for example, the labour force participation rate question under (their incredibly strong definition of) rapid AI progress doesn't make sense.

I shared more information in a restack note, where I could format things properly. Would be interested to know what I'm missing.