Who should have power over AI?

The dispute between the Pentagon and Anthropic has sparked an intense debate

Welcome to The Update. After the Pentagon designated Anthropic a supply chain risk, a debate has broken out about what the relationship between the US government and AI companies should be. Many of the arguments stake out positions on power and regulation that are bound to get more attention as AI capabilities advance. Quotations have been lightly polished for clarity.

Background

From 2024, Anthropic had a contract with the Pentagon that included restrictions on mass surveillance and the use of fully autonomous weapons. The Trump administration demanded that those restrictions be removed, and when Anthropic refused, the Pentagon designated Anthropic a supply chain risk. Initially, some thought this could force Amazon and Google to cut ties with Anthropic and even cause the company to collapse. The Pentagon’s decision was widely criticized, including by the lead writer of the White House’s AI Action Plan, Dean W. Ball:

The US government just essentially announced its intention to impose Iran-level sanctions, or China-level entity listing, on an American company. This is by a profoundly wide margin the most damaging policy move I have ever seen the US government try to take (it probably will not succeed).

Democratic control

But Anthropic also has its critics. Stratechery founder Ben Thompson argues that Anthropic assumes a power it’s not supposed to have:

Who decides when and in what way American military capabilities are used? That is the responsibility of the Department of War, which ultimately answers to the President, who is elected. However, Anthropic’s position is that an unaccountable Amodei can unilaterally restrict what its models are used for.

Anduril CEO Palmer Luckey takes a similar view:

Do you believe in democracy? Should our military be regulated by our elected leaders, or corporate executives?

Palantir CEO Alex Karp is characteristically blunt:

If Silicon Valley believes we’re going to take everyone’s white collar jobs AND screw the military . . . If you don’t think that’s going to lead to the nationalization of our technology – you’re retarded.

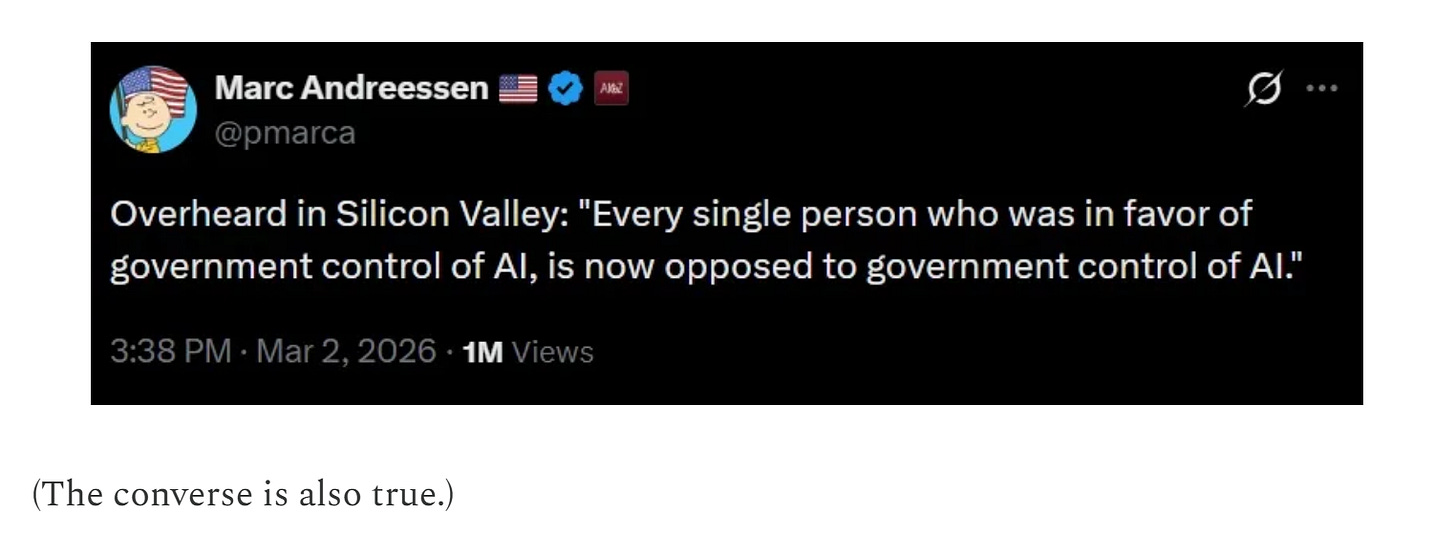

This debate has to an extent inverted standard patterns: left-wingers side with a private company, and right-wingers talk about democratic control. Noah Smith, quoting Marc Andreessen:

Some might say this shows that people are being hypocritical, but I don’t think it does. Consider Rutger Bregman, who has defended Anthropic forcefully:

This is the most important thing happening in the world right now.

The administration wants killer drones + mass surveillance of Americans.

Anthropic refuses to build it. While most tech companies fall in line, they are prepared to pay the price for their principles.

This is entirely consistent with thinking that elected governments should generally have more power than private companies. People don’t make judgments based on their general stance alone, but also consider the specifics of the question at hand. That’s not hypocritical but common sense.

Monopoly on violence

Anthropic CEO Dario Amodei has compared selling advanced chips to China with selling nuclear weapons to North Korea. Thompson now turns this analogy against him:

Why would the US government want to kneecap one of its AI champions?

In fact, Amodei already answered the question: if nuclear weapons were developed by a private company, and that private company sought to dictate terms to the US military, the US would absolutely be incentivized to destroy that company.

. . .

There are some categories of capabilities – like nuclear weapons – that are sufficiently powerful to fundamentally affect the US’s freedom of action; we can bomb Iran, but we can’t North Korea.

To the extent that AI is on the level of nuclear weapons – or beyond – is the extent that Amodei and Anthropic are building a power base that potentially rivals the US military.

. . .

It simply isn’t tolerable for the US to allow for the development of an independent power structure – which is exactly what AI has the potential to undergird – that is expressly seeking to assert independence from US control.

Noah Smith writes in support of Thompson’s argument:

This isn’t a question of law or norms or private property. It’s a question of the nation-state’s monopoly on the use of force.

To exist and carry out its basic functions, a nation-state must have a monopoly on the use of force. If a private militia can defeat the nation-state militarily, the nation-state is no longer physically able to make laws, provide for the common defense, ensure public safety, or execute the will of the people.

I think these arguments go too far. Whatever you think of Anthropic’s refusal to comply with the Pentagon’s demand, it’s not remotely akin to building a private militia. Setting conditions on how the military uses your products is categorically different from building military power of your own.

Regulation skepticism

Anthropic has also received criticism from the opposite direction. The company has long called for more regulation of AI to ensure that it is safely deployed. In some critics’ view, this is now coming back to bite them. Dwarkesh Patel:

The AI safety community has been naive about its advocacy of regulation in order to stem the risks of AI. And honestly, Anthropic specifically has been naive here in urging regulation, and, for example, in opposing moratoriums on state AI regulation. Which is quite ironic, because I think what they’re advocating for would give the government even more power to apply more of this kind of thuggish political pressure on AI companies.

Rob Wiblin responds:

Dwarkesh argues that AI safety regulation is risky because it hands wannabe authoritarians easier options for seizing control of AGI and permanently consolidating their power (among other reasons).

But he also appears to oppose state-level AI regulation, and indeed supports a moratorium on it.

If you’re worried about losing democracy then you should naturally be much more worried about federal-level regulation where the military and intelligence services are located and which no external actor can meaningfully check.

California or Texas or Florida creating their own safety office for frontier models actually distributes influence over AI more widely than it stands currently, and doesn’t easily lead to dictatorships that can’t be challenged or escaped.

So this actually seems to be an argument in favour of abandoning federal regulation and aiming to do things at the state level long-term.

I agree with that. Too much of the debate focuses on regulation in the abstract. This is a good example of how much the specifics matter. Wiblin continues:

You might also say that avoiding federal regulation now wouldn’t stop a bad actor cynically imposing and then exploiting them in future. But that’s a general problem with all arguments that say we shouldn’t do good things now because they’ll be turned to bad ends later.

If you don’t do good things now, the bad things can and often will be done later anyway.

I broadly agree with this, too, though I think there are some nuances. Since bad actors typically don’t have unlimited power, the legislation available for them to invoke can make a difference. The Pentagon might have been able to act even more forcefully against Anthropic if other legislation had been in place. Dwarkesh warns that ‘a purpose-built regulatory apparatus on AI’ could have this effect, though he doesn’t specify which particular regulations are liable to misuse.

Like nukes or the Industrial Revolution?

Dwarkesh also engages with the comparison between AI and nuclear weapons:

Ben Thompson had a post last Monday where he made the point that people like Dario have compared the technology they’re developing to nuclear weapons – specifically in the context of the catastrophic risk it poses, and why we need to export control it from China. But then you oughta think about what that logic implies: ‘if nuclear weapons were developed by a private company, and that private company sought to dictate terms to the US military, the US would absolutely be incentivized to destroy that company.’ And honestly, safety-aligned people have actually made similar arguments. Leopold Aschenbrenner, who is a former guest and a good friend, wrote in his 2024 Situational Awareness memo, ‘I find it an insane proposition that the US government will let a random SF startup develop superintelligence. Imagine if we had developed atomic bombs by letting Uber just improvise.’

In Dwarkesh’s view, this analogy is misconceived:

AI is not some self-contained pure weapon. A nuclear bomb does one thing. AI is closer to the process of industrialization itself – a general-purpose transformation of the economy with thousands of applications across every sector. If you applied Thompson’s or Aschenbrenner’s logic to the industrial revolution – which was also, by any measure, world-historically important – it would imply the government had the right to requisition any factory, dictate terms to any manufacturer, and destroy any business that refused to comply. That’s not how free societies handled industrialization, and it shouldn’t be how they handle AI.

People will say, ‘Well, AI will develop unprecedentedly powerful weapons – superhuman hackers, superhuman bioweapons researchers, fully autonomous robot armies, etc – and we can’t have private companies developing that kind of tech.’ But the Industrial Revolution also enabled new weaponry that was far beyond the understanding and capacity of, say, 17th-century Europe – we got aerial bombardment, and chemical weapons, not to mention nukes themselves. The way we’ve accommodated these dangerous new consequences of modernity is not by giving the government absolute control over the whole industrial revolution (that is, over modern civilization itself), but rather by coming up with bans and regulations on those specific weaponizable use cases. And we should regulate AI in a similar way – that is, ban specific destructive end uses (which would also be unacceptable if performed by a human – for example, launching cyber attacks).

But while AI has some similarities with the Industrial Revolution, there are also important differences. The Industrial Revolution only led to aerial bombardment and chemical weapons after a massively distributed process spanning several centuries. That gave governments ample time to act. By contrast, many people think individual AI companies can develop extremely powerful capabilities in a matter of years. If so, government intervention may have to be more proactive.

Tenuous connections

Many of the arguments in this debate are very broad, and the link to the dispute between Anthropic and the Pentagon is sometimes tenuous. The state’s monopoly on violence and safety regulation of AI are important questions, but this episode sheds less light on them than it might appear. I think the debate would benefit if the arguments became more specific.

Meanwhile, AI doomerism in China is completely absent not because of naivete, but because they have better systems to absorb them.

https://nathankyoung.substack.com/p/notes-on-the-cultural-revolution?r=2kp7ol

One hates to agree with Hegseth or anyone in the Trump administration. Companies make things and sell them, but they do not constrain the user's use of the product. How can they? In this case the vendor is like a consultant or a temporary employee. They may refuse to do certain things. That is their right. The customer is free to fire the temp or end the contract. What is wrong is declaring Anthropic a security risk. At least let them appeal that designation.

Bottom line: when the electorate comes to its senses, Hegseth and his boss will be gone, I hope.